Updated: June 9, 2023.

Is your site experiencing a traffic loss? Are you wondering if it’s because of the Google algorithm update? If your answer is YES, then this is the guide just for you.

Google has recently been very prolific when it comes to the number of confirmed algorithm updates. However, that does not necessarily mean that your site’s traffic is down due to one or more of these updates.

There are lots of possible causes of traffic loss… other than an algorithm update. And, obviously, to be able to fix the situation, you need to know the real reason, which is exactly what I explore in this guide.

Let’s get started! Here are 15 ways (plus one bonus way) to check if your site’s traffic is down because of an algorithm update or something else.

❗If you are new to SEO, don’t miss my SEO basics guide and my list of SEO tips.

1. Make sure the site is not experiencing server errors

If your site’s server is returning status codes 5XX, then something is definitely off, and, yes, you may be experiencing a traffic loss as a result.

If server errors continue for more than 2-3 days, Google may start to deindex your site starting with the pages that are crawled the most (the most popular web pages usually drop first).

Here is how to check whether your site is having server issues:

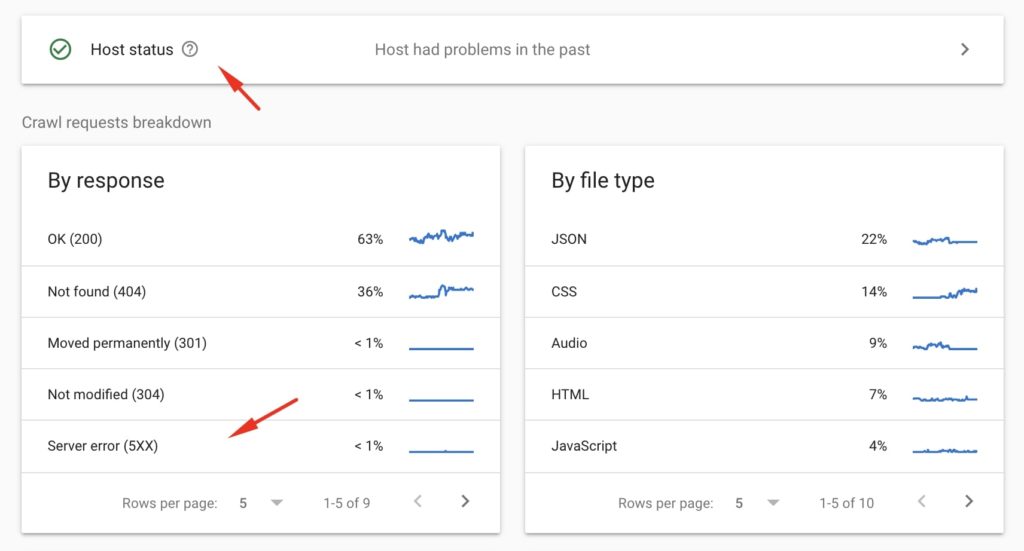

- Check the crawl stats report in Google Search Console and see what’s under Host status and the Crawl requests breakdown. If there are issues with the host and you see a large share of responses listed as Server error (5XX), then you should contact your host to learn why.

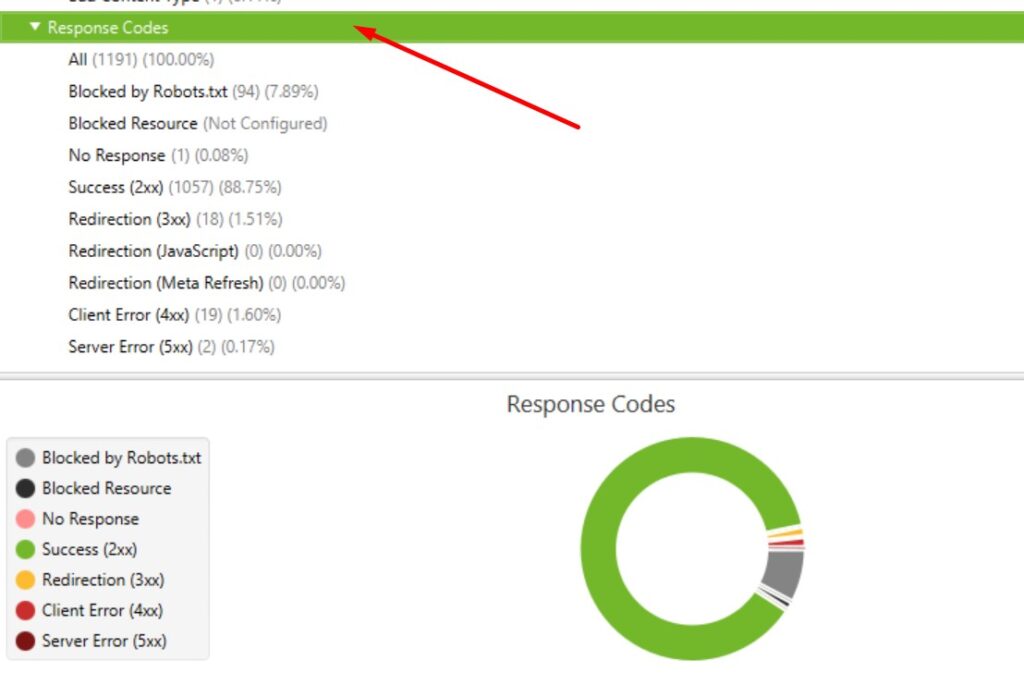

- Crawl your site with Screaming Frog or Sitebulb to check if there are pages returning 5XX server errors.

- Simply visit a couple of pages on your site and see if they load correctly. If the situation is quite serious, you may come across 5XX without much effort from your side. This is the situation I was in when I was still using Bluehost😡 and which made me change the hosting provider.

If the server is indeed to blame, then you need to contact the hosting provider and get guidance and support. If you are using some cheap shared hosting plan, then you should seriously think about moving to a dedicated server.
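If you would rather script the spot check than click through pages by hand, here is a minimal Python sketch. It uses only the standard library, the function names and the user agent string are made up for illustration, and it is no replacement for a full crawl with Screaming Frog or Sitebulb. Note that `urlopen` follows redirects, so the status you get is the one of the final URL.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if the server could not be reached."""
    request = urllib.request.Request(
        url,
        method="HEAD",  # some servers reject HEAD; switch to GET if you see 405s
        headers={"User-Agent": "traffic-drop-check/0.1"},  # hypothetical user agent
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # 4XX and 5XX responses are raised as HTTPError; the code is still useful.
        return error.code
    except (urllib.error.URLError, OSError):
        # DNS failure, refused connection, timeout, etc.
        return None


def find_server_errors(urls, fetch=fetch_status):
    """Return {url: status} for URLs that answer with 5XX or do not answer at all."""
    problems = {}
    for url in urls:
        status = fetch(url)
        if status is None or 500 <= status <= 599:
            problems[url] = status
    return problems
```

Calling `find_server_errors([...])` with a handful of your most important URLs returns an empty dictionary when none of them answers with a 5XX. The `fetch` parameter is only there so that the logic can be tried out without touching a real server.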

2. Exclude common technical SEO errors

If your traffic suddenly falls off the cliff, then it may be due to some serious technical SEO error. The quickest way to check if that’s happening is to perform a full crawl of your site with either Sitebulb or Screaming Frog.

Things to watch out for include:

- Noindex and nofollow tags

- Robots.txt directives that, for example, block the entire site from Google

- Canonical tag issues, such as canonicalizing all web pages to the homepage (I once saw such a case)

- Password protection of the areas that should be visible to users and search engine robots

Technical errors are usually quick to fix and there is a huge chance that the rankings and traffic will return quickly once Google recrawls the site.
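For a quick check of a single page before you run a full crawl, the first three items from the list above can be sketched in Python with the standard library only. The function and class names are illustrative. Also keep in mind that `urllib.robotparser` does not implement every rule that Googlebot supports (wildcards, for example), so treat the result as a hint rather than as Google’s verdict.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt, url):
    """Check whether the given robots.txt content lets Googlebot fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


class HeadTagScanner(HTMLParser):
    """Collect meta robots directives and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots_directives = []
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attributes.get("name", "").lower() in ("robots", "googlebot"):
            content = attributes.get("content", "")
            self.robots_directives += [part.strip().lower() for part in content.split(",")]
        elif tag == "link" and "canonical" in attributes.get("rel", "").lower().split():
            self.canonical = attributes.get("href")


def scan_page(html):
    """Return the noindex/nofollow flags and the canonical URL found in html."""
    scanner = HeadTagScanner()
    scanner.feed(html)
    return {
        "noindex": "noindex" in scanner.robots_directives,
        "nofollow": "nofollow" in scanner.robots_directives,
        "canonical": scanner.canonical,
    }
```

If `scan_page` reports the same canonical URL (for example, the homepage) for many different pages, or `googlebot_allowed` returns `False` for pages that should rank, you have probably found the culprit. The sketch only looks at the HTML, so a noindex sent through the `X-Robots-Tag` HTTP header would go unnoticed.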

☝️ Don’t miss my notes from the Google SEO basic guide with the Google best practices for SEO.

3. Make sure that the site’s speed and performance are not absolutely dismal

Speed is one of the hundreds of ranking factors and more of a tie-breaker but if the site’s speed and performance are absolutely horrible, then – yes – it may cause a drop in traffic.

Google may demote or abandon crawling a site if it’s taking forever to load. Users may lose their patience and return to the SERPs, which Google will also see.

Here is how to check if the site’s speed is causing issues:

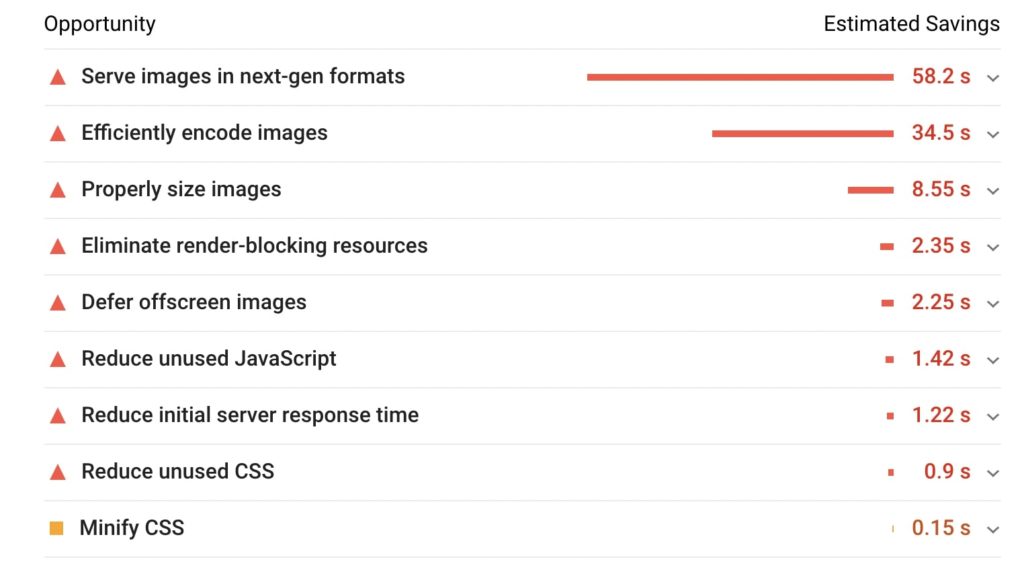

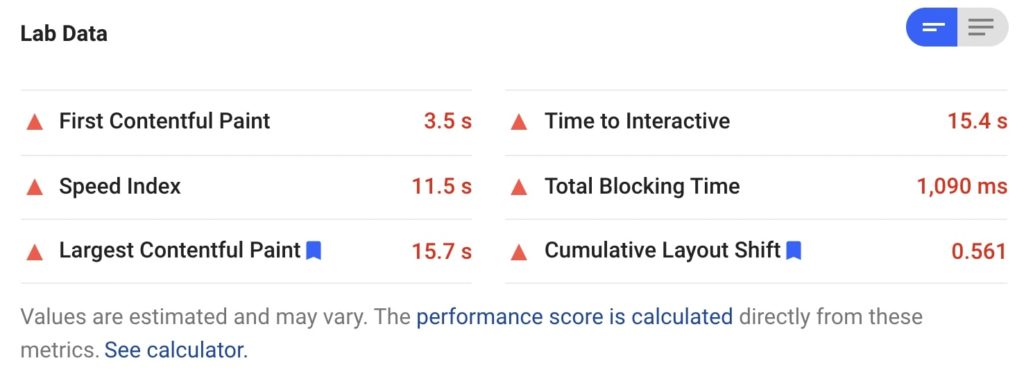

- Test the site with Google PageSpeed Insights and pay special attention to anything marked in red. If everything is in red and the site’s score is close to 1/100, then it may be the speed to blame. Below is an example of a site I have recently been auditing and the speed scores it got in PSI.

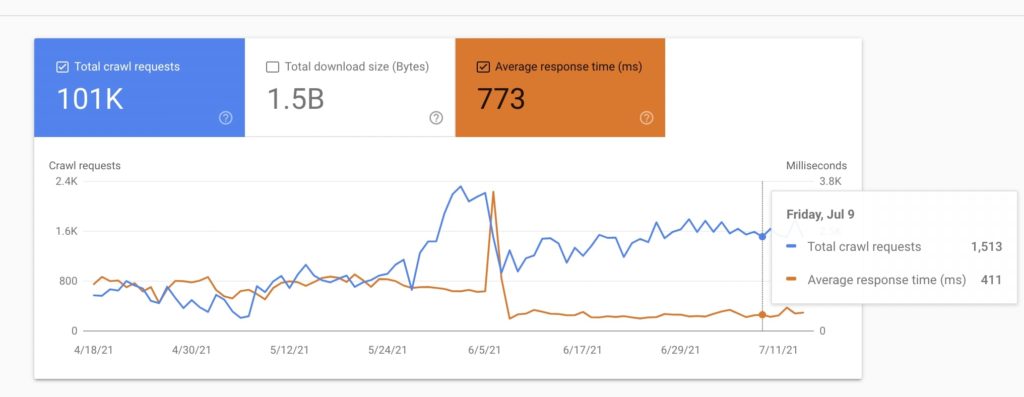

- Check the GSC crawl stats report and check the average response time. Are there any spikes? The spike below was the moment when I lost my patience with Bluehost and moved to a different server.

- Simply open your site and check how it’s loading. If the situation is critical, you will notice even on a high-speed internet connection.

In addition to performing regular speed and Core Web Vitals optimizations, it can very often make a huge difference if you simply change the server and move to a fast dedicated solution. This is what I did and all speed and performance-related problems on my site magically disappeared.
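If you want to test more than a couple of pages, PageSpeed Insights can also be queried through its public API (version 5). Below is a minimal sketch in Python. It assumes that the performance score sits under `lighthouseResult.categories.performance.score` as a number between 0 and 1; the function names are illustrative, and the API key is optional for occasional use.

```python
import json
import urllib.parse
import urllib.request

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url, strategy="mobile", api_key=None):
    """Build the PageSpeed Insights API request URL for one page."""
    params = {"url": page_url, "strategy": strategy}
    if api_key:
        params["key"] = api_key
    return PSI_ENDPOINT + "?" + urllib.parse.urlencode(params)


def performance_score(psi_response):
    """Return the performance score on the 0-100 scale, or None if it is missing."""
    score = (
        psi_response.get("lighthouseResult", {})
        .get("categories", {})
        .get("performance", {})
        .get("score")
    )
    return None if score is None else round(score * 100)


def fetch_performance_score(page_url, strategy="mobile", api_key=None, timeout=60):
    """Call the API (this makes a network request) and return the 0-100 score."""
    request_url = build_psi_url(page_url, strategy, api_key)
    with urllib.request.urlopen(request_url, timeout=timeout) as response:
        return performance_score(json.load(response))
```

A single run can take a while because Lighthouse actually loads the page, hence the generous timeout. Scores fluctuate between runs, so look for pages that are consistently deep in the red rather than at one unlucky result.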

4. Make sure that the site’s page experience isn’t absolutely dismal either

Google Page Experience, just like the above-mentioned speed, is also one out of many ranking factors and more of a tiebreaker. However, if it’s absolutely dismal, then the site may experience a traffic loss.

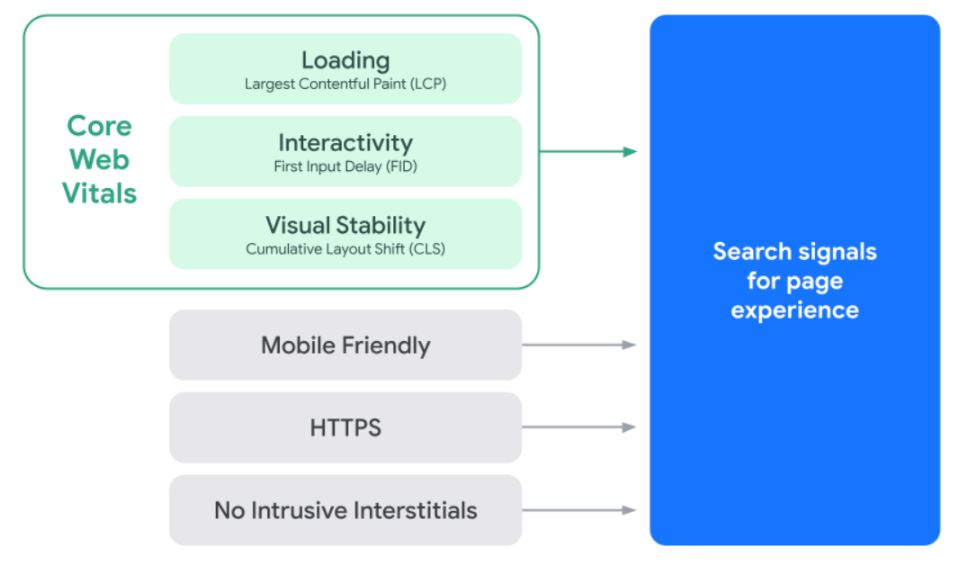

As a reminder, the Google Page Experience update started rolling out in mid-June 2021 and was scheduled to be fully rolled out by the end of August 2021. The page experience signals include Core Web Vitals, HTTPS, mobile-friendliness, and no intrusive interstitials.

Here are the situations where having a bad page experience may hurt a site and cause a traffic loss:

- Your Core Web Vitals are tragic: for example, LCP and/or FID is close to 8-10 seconds or more, so bad that it is hard to use the site normally. Below are the scores that are indeed dismal.

- Your site has a horrible ad experience and Google is penalizing it for having too many intrusive interstitials.

- You are an e-commerce shop, and you don’t have HTTPS.

If your traffic loss started shortly after you implemented very aggressive ads, then you should look into that further.

You can check the actual status of your Ad Experience Report in the old part of GSC.

5. Analyze the traffic in Google Search Console

If your site’s experiencing a traffic loss, the first place to check the details is Google Search Console. GSC should be your number one SEO tool for diagnosing any traffic drops because its whole purpose is to provide the data on the organic traffic, visibility, and indexability of your site.

Google Analytics (GA), on the other hand, shows you the data about all your users and all traffic sources, including, for example, referral or paid traffic. That’s why when it comes to SEO data, Google Search Console is the go-to tool.

To confirm that it is indeed the organic traffic that has dropped, go to the Performance report in Google Search Console. Remember, however, that there is a lag of a few days in GSC, and the freshest data you can see there is from two days ago.
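If you export the daily data from the Performance report, you can put a number on the drop instead of eyeballing the chart. The Python sketch below assumes a CSV file with a `Date` column in `YYYY-MM-DD` format and a `Clicks` column; check your own export and adjust the column names if they differ. The 14-day window is an arbitrary choice.

```python
import csv
import io
from datetime import date


def load_daily_clicks(csv_text):
    """Parse CSV text with Date and Clicks columns into {date: clicks}."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {date.fromisoformat(row["Date"]): int(row["Clicks"]) for row in reader}


def change_around(daily_clicks, cutoff, window_days=14):
    """Percent change in average daily clicks: window before cutoff vs window from cutoff on.

    Returns None when one of the two windows has no data or the baseline is zero.
    """
    before = [
        clicks for day, clicks in daily_clicks.items()
        if 0 < (cutoff - day).days <= window_days
    ]
    after = [
        clicks for day, clicks in daily_clicks.items()
        if 0 <= (day - cutoff).days < window_days
    ]
    if not before or not after:
        return None
    average_before = sum(before) / len(before)
    average_after = sum(after) / len(after)
    if average_before == 0:
        return None
    return (average_after - average_before) * 100 / average_before
```

Use the date of the suspected drop (or of a confirmed update) as `cutoff`. Because of the reporting lag mentioned above, leave out the last two or three days so that incomplete data does not look like a drop.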

6. Check if it is the organic traffic that actually went down

To get the whole picture of why (and if) your site may be losing traffic, you need to analyze the situation with a few different tools.

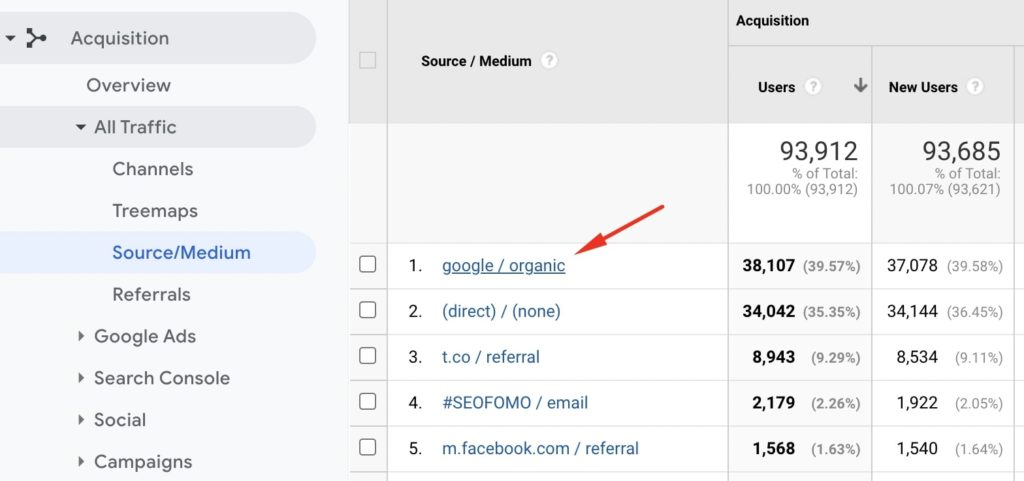

One common mistake that I often see is that people only look at the Google Analytics unfiltered traffic view. If they see a drop there, then they ascribe it to the Google algorithm update, which is not always the case.

Not only is GA not the right tool to analyze the SEO traffic (see the point above), but its unfiltered view can be skewed a lot by, for example, bot traffic.

The default traffic view that you see in GA once you log in shows all traffic sources. The only way to be really sure that it is indeed Google that has issues with your site is to check the organic traffic in Google Analytics. To do that, go to Acquisition > All Traffic > Source/Medium and select google / organic.

That’s why you always need to analyze any potential traffic drops using a variety of tools (GSC, GA, Ahrefs, Semrush, etc.) and metrics (earnings, conversions, etc.).

7. Check the visibility of the site in Semrush and/or Ahrefs

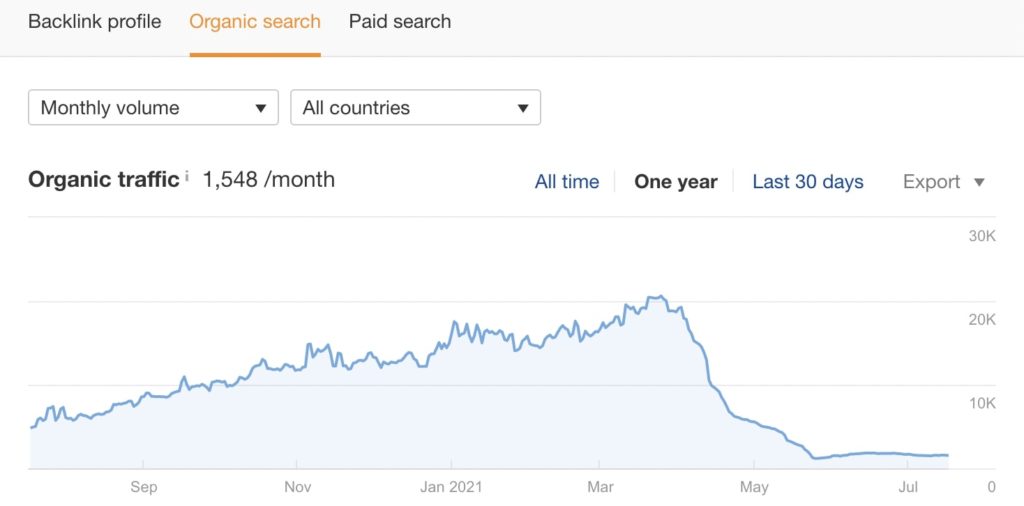

Semrush and Ahrefs are usually very quick to notice that the site is experiencing an organic traffic loss. Of course, these tools only provide estimates and are not free of errors but if the site’s positions and visibility are falling off the cliff, these tools usually pick that up.

If Ahrefs or Semrush shows a huge visibility loss (in the organic traffic section) and you have ruled out other possible reasons, then this may indeed have something to do with Google shifting their algorithms and relevance.

Checking the site with these tools is especially important if you don’t have access to the GSC data.

8. Compare the organic traffic from Google vs Bing

One of the ways to check if the traffic loss is caused by the Google algorithm update is to compare organic traffic between Google and Bing.

If the traffic trajectory is stable in Bing and has fallen only for Google, then it is very likely that Google has problems with your site. If you see the drops both in Google and Bing, then it may be a technical issue that is causing this.

Here is how you can compare traffic from Google with traffic from Bing:

- In GA, go to Acquisition > All Traffic > Source/Medium and choose google / organic and bing / organic. Note that if your site does not get a significant amount of traffic, you may not be able to rely on this data too much.

- Use Bing Webmaster Tools and compare what you see there with what you see in Google Search Console.

Keep in mind that your Bing traffic is surely many times smaller than the traffic from Google and you are only looking for a similar pattern or lack thereof.
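The comparison boils down to looking at relative changes, not absolute numbers. Here is a small Python sketch of that logic. The -20% threshold and the labels are arbitrary assumptions made for illustration, so pick values that make sense for your site.

```python
def percent_change(before, after):
    """Relative change in percent, or None when there is no baseline."""
    if before == 0:
        return None
    return (after - before) * 100 / before


def compare_engines(google_before, google_after, bing_before, bing_after,
                    drop_threshold=-20.0):
    """Classify the pattern of organic sessions from Google and Bing.

    drop_threshold is an arbitrary cut-off (in percent) for calling a change a drop.
    """
    google = percent_change(google_before, google_after)
    bing = percent_change(bing_before, bing_after)
    if google is None or bing is None:
        return "insufficient_data"
    google_dropped = google <= drop_threshold
    bing_dropped = bing <= drop_threshold
    if google_dropped and bing_dropped:
        return "both"  # look for a technical or site-wide cause first
    if google_dropped:
        return "google_only"  # Google-specific, possibly an algorithm update
    if bing_dropped:
        return "bing_only"
    return "stable"
```

For example, `compare_engines(1000, 600, 50, 49)` returns `"google_only"`: Google sessions fell by 40% while Bing barely moved. With very small Bing numbers a few sessions can swing the percentage a lot, so compare periods that are long enough to smooth that out.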

❗ Don’t miss my list of Google SEO tools.

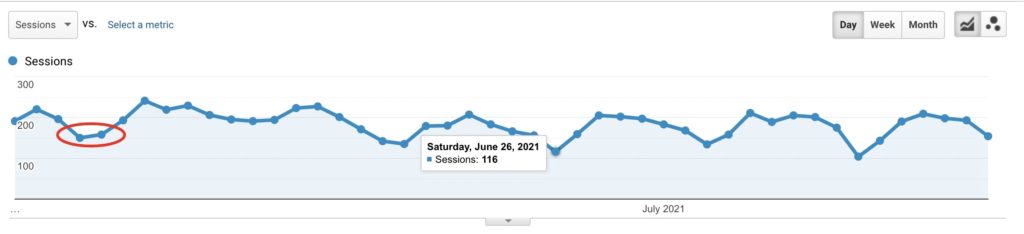

9. Exclude seasonality and/or holidays

A very trivial and simple reason why your site may be experiencing a sudden traffic loss is seasonality and/or holidays.

- For example, for e-commerce sites, it is totally normal to always experience traffic drops over the weekend and see spikes on Monday.

- The same is true for holidays, such as Christmas or the 4th of July. Your traffic may indeed fall off the cliff on those dates (depending on your niche) and it’s totally OK.

- If your site is in a very seasonal niche like golf, then you may feel as if you have been penalized by Google in the winter months. That’s because fewer people are interested in and actively looking for information and products relating to this spring/summer activity.

Seasonality is completely normal and does not mean that Google has issues with your site.
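A simple way to rule out seasonality is to compare the affected period with the same period a year earlier. The Python sketch below shifts the dates by 52 weeks (364 days) so that weekdays line up with weekdays. It assumes you already have daily clicks in a dictionary keyed by date, for example from a GSC export; the function name is illustrative.

```python
from datetime import date, timedelta


def year_over_year_change(daily_clicks, start, end):
    """Percent change of total clicks in [start, end] vs the same weekdays 52 weeks earlier.

    Returns None if any day is missing in either period or last year's total is zero.
    """
    shift = timedelta(weeks=52)  # 364 days keeps Monday aligned with Monday
    current_total = 0
    previous_total = 0
    day = start
    while day <= end:
        if day not in daily_clicks or (day - shift) not in daily_clicks:
            return None
        current_total += daily_clicks[day]
        previous_total += daily_clicks[day - shift]
        day += timedelta(days=1)
    if previous_total == 0:
        return None
    return (current_total - previous_total) * 100 / previous_total
```

If the week-over-week numbers look scary but the year-over-year change is close to zero, you are most likely looking at the usual seasonal pattern. Holidays that move between years (Easter, for example) will not line up with a 52-week shift, so check those manually.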

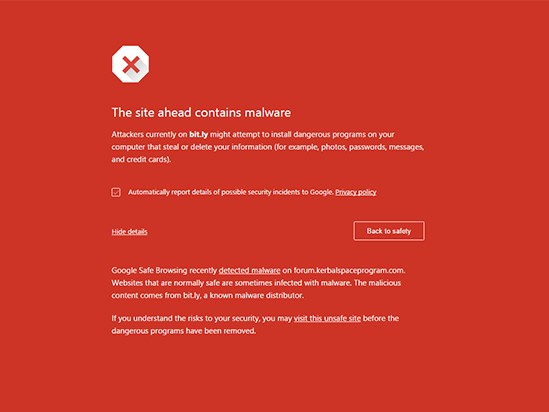

10. Check if there are security issues

Security or Google Safe Browsing is one of the above-mentioned Google Page Experience signals but it’s so important that it deserves a separate section in this guide.

If your site has serious security issues, then Google may demote it in search to protect users, and instead of showing your site Chrome will display an ugly red warning.

If the site is having security issues, then Google Search Console will notify website owners about that via e-mail. These issues will also be clearly visible in the main view in GSC and in the Google Search Console Security report section.

Google Search Console reports on the following three types of security issues:

- Hacked content (malware, code injection, content injection, URL injection)

- Malware and unwanted software (deceptive pages, deceptive embedded resources, harmful downloads)

- Social engineering (unclear mobile billing, possible phishing detected on user login, uncommon downloads)

If your site is experiencing security issues, then it should immediately become your top priority to clean this up.

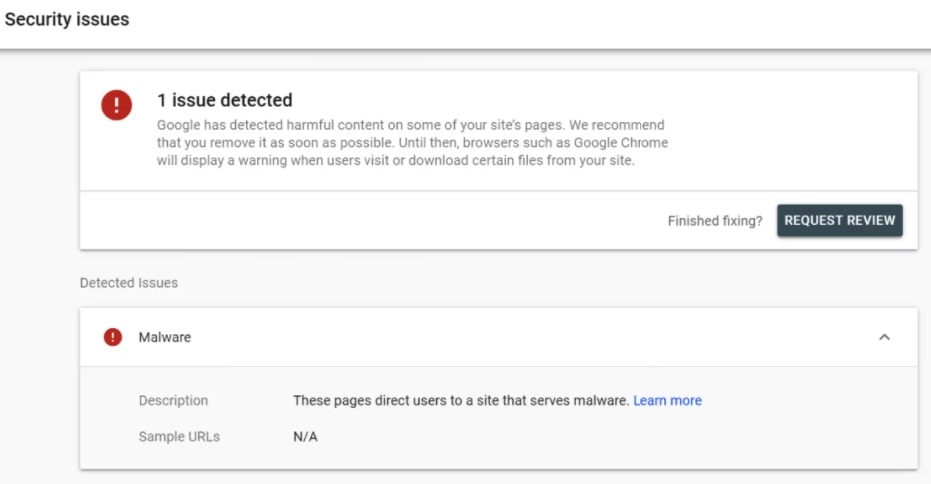

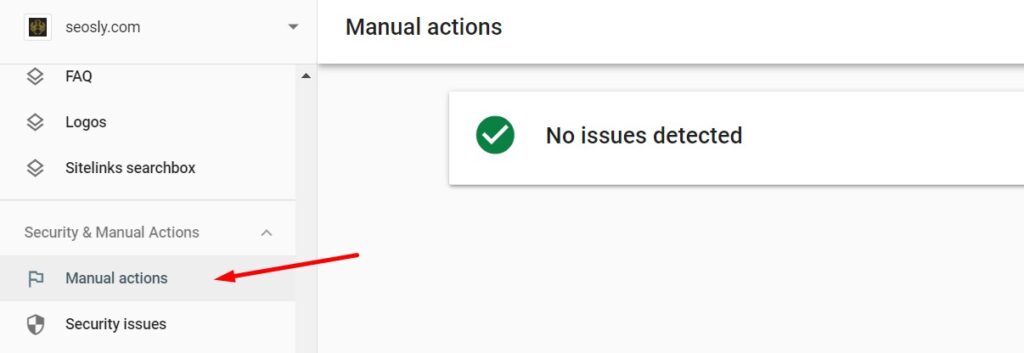

11. Check if the site has a manual action

If a site has a manual action, some of its pages or even the entire website may totally disappear from Google.

If this is the case with your site, then you will also see the notification in Google Search Console (right after you log in), get an e-mail, and see that information in the Manual Actions report.

Here are the types of manual actions that Google may apply to sites:

- Site abused with third-party spam

- User-generated spam

- Spammy free host

- Structured data issue

- Unnatural links to your site

- Unnatural links from your site

- Thin content with little or no added value

- Cloaking and/or sneaky redirects

- Pure spam

- Cloaked images

- Hidden text and/or keyword stuffing

- AMP content mismatch

- Sneaky mobile redirects

- News and Discover policy violations

Just like with security issues, if your site indeed has a manual action, then it should become your number one priority to clean this up and file a reconsideration request. Note that you won’t see a notification if your site has been punished algorithmically.

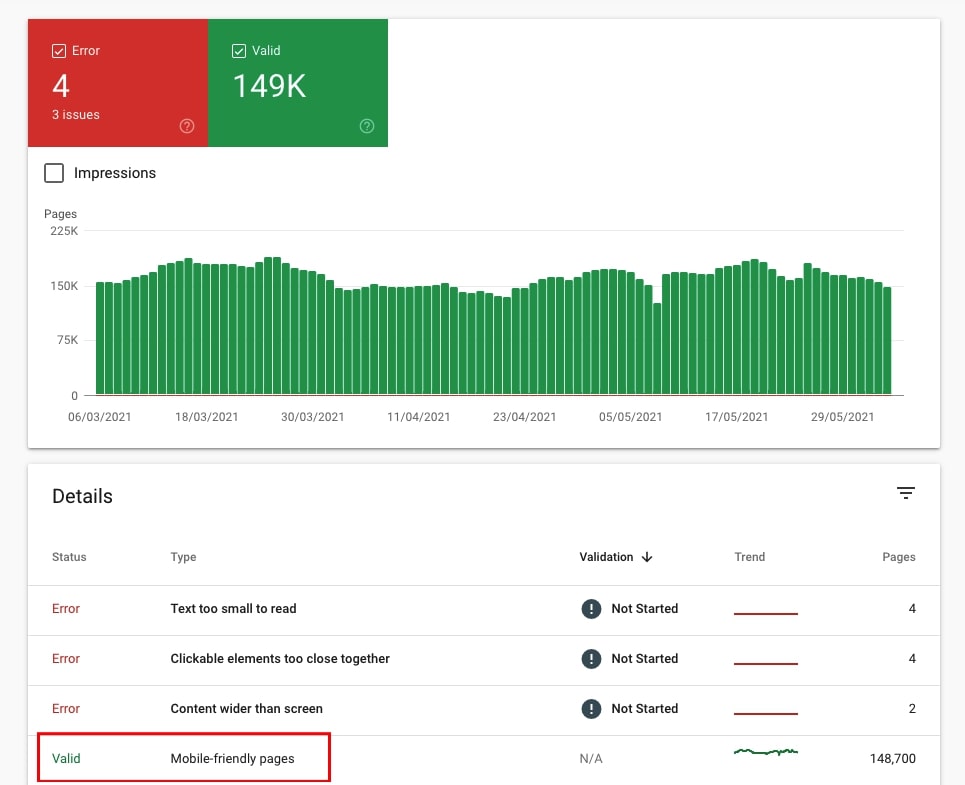

12. Check if the site is mobile-friendly

If the site is not mobile-friendly or it is a nightmare to use it on a mobile device, then Google may demote it in SERPs.

The site may also stop being mobile-friendly or responsive because of some technical issue and – as Google is using Googlebot Smartphone as the main crawler for practically all sites right now – this may cause a traffic drop.

You can check if the site is experiencing mobile-friendliness issues in the Mobile Usability report in Google Search Console.

GSC will report on the following mobile usability issues:

- Using incompatible plugins

- Viewport not set

- Viewport not set to “device-width”

- Content wider than screen

- Text too small to read

- Clickable elements too close together

If your traffic drop correlates with the date when mobile usability issues started to pop up, then you definitely want to take a deeper look into that.
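Two of the issues listed above, the viewport not being set and the viewport not being set to “device-width”, are easy to check in the page source. Here is a minimal Python sketch that does it with the standard library. The names are illustrative, and the check looks only at the raw HTML, so a viewport tag injected by JavaScript will not be seen.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Remember the content of the last <meta name="viewport"> tag on the page."""

    def __init__(self):
        super().__init__()
        self.viewport_content = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.viewport_content = attributes.get("content") or ""


def viewport_status(html):
    """Return 'ok' or a short description of the viewport problem found in html."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.viewport_content is None:
        return "viewport not set"
    normalized = finder.viewport_content.replace(" ", "").lower()
    settings = dict(part.split("=", 1) for part in normalized.split(",") if "=" in part)
    if settings.get("width") != "device-width":
        return 'viewport not set to "device-width"'
    return "ok"
```

Run it against the HTML of a few key templates (homepage, category page, article page). If a template that used to return `"ok"` no longer does after a theme or plugin update, that is worth a closer look.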

13. Check if there has been a redesign or migration done recently

Whenever you are auditing a site and looking for the reasons for a traffic drop, you should start by asking your client if there has been a migration or redesign done recently.

Here is how you can check whether there have been some serious changes made to the site:

- Use the Wayback Machine and check how the site looked before the traffic loss. If it looked totally different, then you probably have the answer.

- Analyze the organic visibility in Ahrefs or Semrush, paying special attention to broken backlinks which may indicate the pages that might have been improperly redirected or (accidentally) removed.

If the redesign resulted in serious structure changes, then – yes – it may lead to a traffic drop. If the migration has not been done correctly, then it can also wipe the slate clean for the website in terms of organic traffic.
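If you have a list of the old URLs and the new URLs they were supposed to redirect to, you can verify the redirects with a short script. The Python sketch below is a rough illustration with made-up function names. It sends a HEAD request without following redirects and flags every old URL that does not answer with a permanent redirect (301 or 308) to the expected target.

```python
import urllib.error
import urllib.request


class NoRedirect(urllib.request.HTTPRedirectHandler):
    """Stop urllib from following redirects so the first response can be inspected."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None


def fetch_without_redirects(url, timeout=10):
    """Return (status, Location header) of the first response, or (None, None)."""
    opener = urllib.request.build_opener(NoRedirect)
    request = urllib.request.Request(url, method="HEAD")
    try:
        with opener.open(request, timeout=timeout) as response:
            return response.status, None
    except urllib.error.HTTPError as error:
        # Unfollowed redirects and 4XX/5XX responses all end up here.
        return error.code, error.headers.get("Location")
    except (urllib.error.URLError, OSError):
        return None, None


def audit_old_urls(expected_redirects, fetch=fetch_without_redirects):
    """expected_redirects maps each old URL to the new URL it should redirect to."""
    issues = {}
    for old_url, new_url in expected_redirects.items():
        status, location = fetch(old_url)
        if status not in (301, 308):
            issues[old_url] = f"status {status}, expected a permanent redirect"
        elif location != new_url:
            issues[old_url] = f"redirects to {location}, expected {new_url}"
    return issues
```

The comparison of the `Location` header is a plain string match, so a relative `Location` value or a trailing-slash difference will be reported as an issue and needs a manual look. Redirect chains are not followed either: only the first hop is checked.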

14. Check if there has been some major developmental work done on the site recently

This point is a bit related to what I talk about in the section about ruling out possible technical SEO errors.

I personally saw at least a few examples of sites that lost tons of traffic after a developer (who is not into SEO very much) introduced some seemingly small changes and, for example, put the noindex and/or nofollow tag on the entire website or flipped the staging site with the production site too early.

If a traffic drop follows some development work on the site, you should definitely dive deep into what exactly has been done.

15. Check if the decline in traffic correlates with the decline in conversions/earnings

Google is constantly updating its algorithms and shifting relevance. I see it time and time again that sites lose traffic and organic visibility for certain keywords without really losing money and/or conversions.

If that’s the case with your site and your earnings and/or conversions stay stable for a couple of weeks with the decreased traffic, then your site is probably totally OK. Google has just cut the traffic that wasn’t really relevant to your site. That’s good!

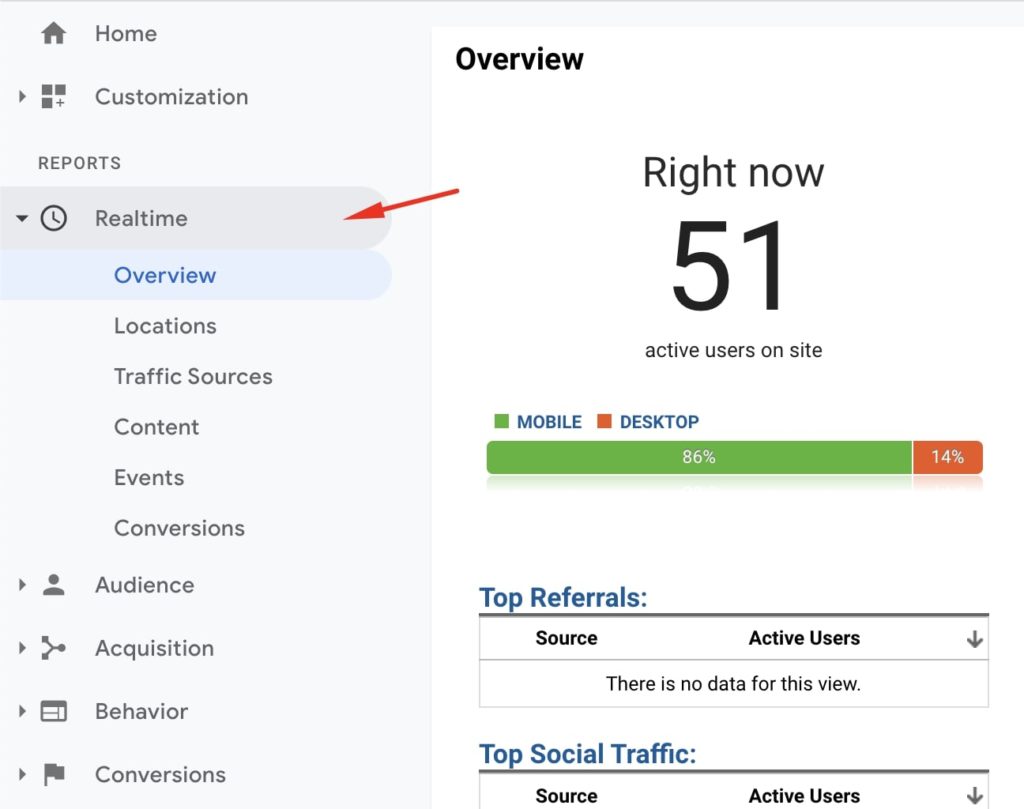

Bonus: Check if Google Analytics code is actually working

This is a very trivial and stupid reason but it happens too often, especially if the site is using advanced caching and/or Cloudflare. If suddenly your traffic falls down to zero, then you should make sure that the GA code is actually working. A quick way to do that is by opening your site on your mobile phone and then going to the live traffic section in GA. If you cannot see your session there, then your GA code may be off.

The problem with GA is that it won’t tell you if it stops working. Google Search Console, on the other hand, will alert you when your site is no longer verified.

Comparing GSC traffic with GA is also a way to verify if the GA code is working or not. If GSC shows traffic and GA does not, then again the GA code needs fixing (not your website).
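Another quick sanity check is to look for the tracking snippet in the page source. The Python sketch below searches the HTML for the most common Google tag URLs. The patterns are assumptions based on the standard snippets, so a tag loaded through a consent manager, a script-delaying plugin, or a custom setup may not be found this way.

```python
import re

# Patterns based on the standard Google snippets (assumed, not exhaustive).
GA_PATTERNS = {
    "gtag.js": re.compile(r"googletagmanager\.com/gtag/js\?id=[A-Za-z0-9-]+"),
    "Google Tag Manager": re.compile(r"googletagmanager\.com/gtm\.js|GTM-[A-Z0-9]+"),
    "analytics.js": re.compile(r"google-analytics\.com/analytics\.js"),
}


def detect_google_tags(html):
    """Return the names of the Google tag snippets whose pattern appears in html."""
    return [name for name, pattern in GA_PATTERNS.items() if pattern.search(html)]
```

An empty list for a page that should be tracked is a strong hint that the snippet got lost, for example after a theme change or a caching plugin update. A non-empty list only tells you the snippet is present in the HTML, not that hits are being recorded, so still confirm it in the live traffic section in GA.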

❗ Don’t miss my basic Google Analytics 4 guide.

What if it is indeed the Google core algorithm to blame?

If your site’s traffic declined because of the algo update, then your task should be to find out what your site needs to work on. In most cases, it is not just one thing that you need to fix but rather work on the site’s overall quality, relevance, and E-A-T (Expertise, Authoritativeness, and Trust).

Whenever there is a Google core algorithm update, Google recommends that we read their post about Google’s core updates. This post (and your honest answers to the questions it contains) together with the Google Quality Raters’ Guidelines should be your mandatory reading if your site has lost traffic as a result of the Google core algorithm update.


Very good guide, Olga! From the basics to more advanced stuff.

Thank you for putting it together for us.

Hello Paulo, thank you so much for your comment. I am very happy you like the article 🙂

Great article for beginners.

I’m surprised you didn’t mention checking server logs (depending on the server technology).

GA is great but not the best in terms of accuracy due to privacy settings, browsers etc.

Still, everything else is what I’d check.

I’d also like to point out that I’m extremely impressed you mentioned seasonality.

Not a lot of SEOs include this in their reports.

I have clients that excel in the winter and suffer in the summer or vice versa due to their product ranges but that can be resolved quite easily by including all-year round products.

Last thing to add would be to exclude certain types of pages from the “Acquisition > Source/Medium” GA report you mentioned.

For example, has traffic to blog pages dropped or is it category pages? Maybe it’s a specific product range etc. Run a year-on-year comparison, exclude subfolders such as /blog or /category in the “advanced” section and you’ll have a more detailed view of your traffic.

This is an article that I was looking for. First create the content, then optimize the content with high-frequency keywords, do an SEO audit after that, and check the traffic.

That’s the way it works. At least at present 🙂

Techniques that you have listed in this post are really very effective to recover the organic traffic of a website.

Thanks Olga, for sharing this valuable information with the world.

Hello Danish! Thanks for stopping by and commenting. I really hope this will help as many people as possible.