Updated: July 1, 2023.

A quick guide on how to get your site crawled by Google (and lots of practical tips).

Let’s discuss an essential part of SEO: ensuring your site gets crawled by Google. If you’re new to this, you might ask, “What does crawling mean?” In simple terms, crawling is the process by which Google discovers and fetches the pages of your website.

Think of Google as a digital explorer. Its bots, commonly known as Googlebots or spiders, crawl through your website, examining each page, link, image, and piece of content they encounter.

Now, why does this matter? Crawling is the first step in making your site visible in Google’s search results. If Google isn’t aware of your website, it can’t display it to users. Therefore, getting your site crawled by Google isn’t just a nice-to-have – it’s a must for your SEO efforts.

So, let’s dive deeper into understanding how to encourage Google to effectively crawl your site.

TL;DR: How to get your site crawled by Google

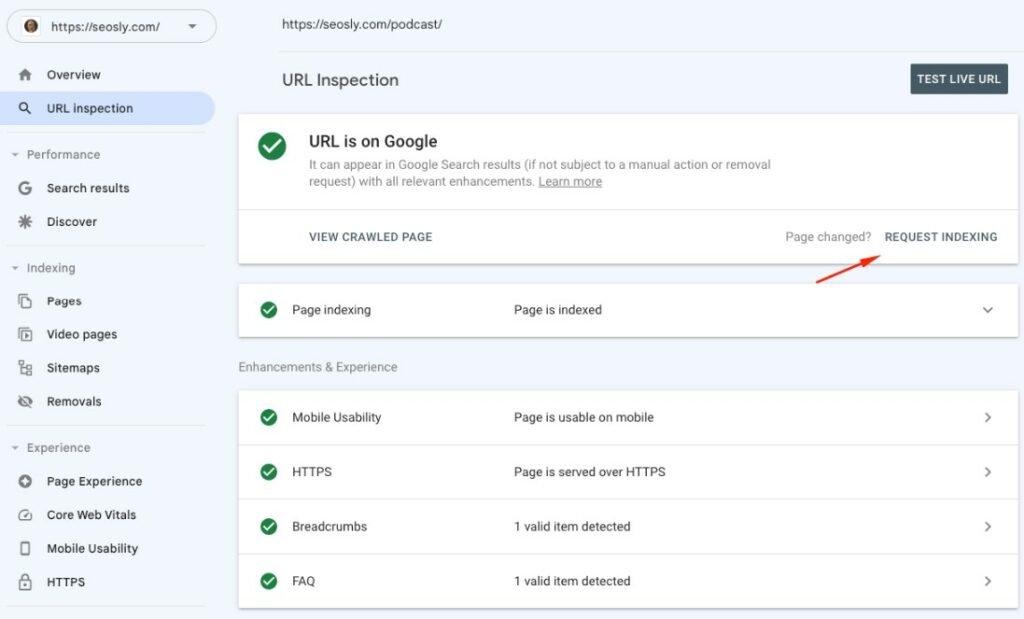

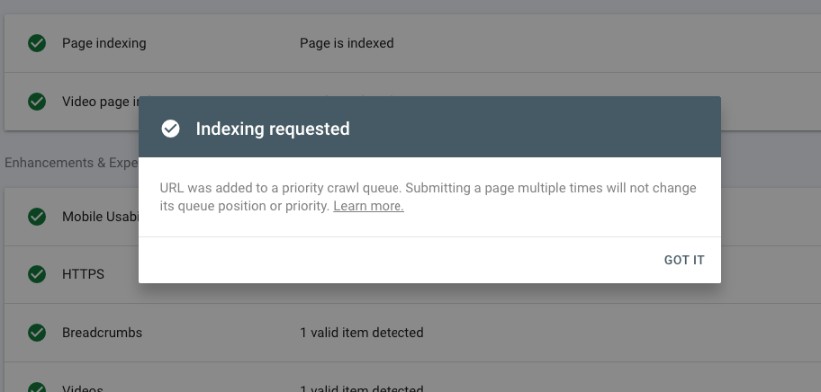

The quickest way to get your site crawled by Google is by using Google Search Console’s “Request indexing” feature. Simply add your site to Google Search Console, go to URL inspection, enter your URL, and hit the “Request indexing” button. This will prompt Google to visit your site.

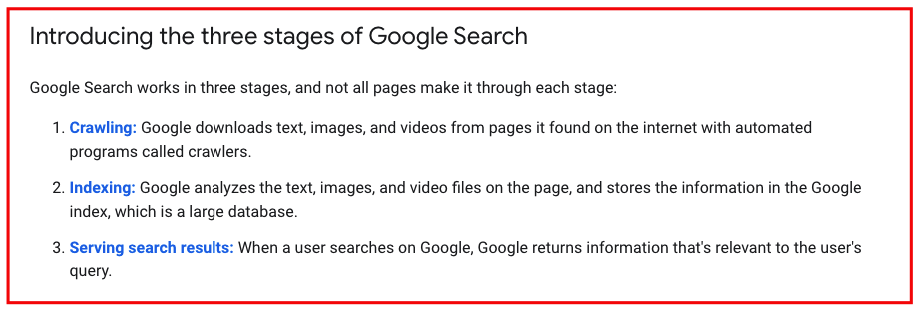

Google’s crawling process in a nutshell

Before we dive into the nitty-gritty of getting your site crawled by Google, let’s take a moment to understand what we’re dealing with.

How Google’s crawling and indexing works

You see, Google’s web crawler, known as Googlebot, has a big job. It endlessly roams around the internet, hopping from one webpage to another through links, looking for new and updated content. When it finds these new or updated pages, it examines and evaluates them. This process is called crawling.

Next, Google builds a massive index of all the words it sees and their location on each page. It also processes information included in key content tags and attributes, such as title tags and ALT attributes. This second part of the job is known as indexing.

Check my articles about indexing:

- How Long Does It Take Google To Index A New Website?

- How To Check If A Page Is Indexed

- “Indexed, Not Submitted In Sitemap” is now “Indexed” in “Unsubmitted Pages Only”

- How To Check If Google Is Indexing JavaScript Content Correctly

Factors that influence Google’s decision to crawl a site

There’s no fixed schedule for when Googlebot will swing by your site for a visit. It’s more like an impromptu drop-in from a friendly neighbor.

However, some factors can influence the frequency of these visits, including:

- Website size: Larger sites, with more pages, might see more frequent crawling simply due to the sheer amount of content available.

- Website popularity: If your site has a high number of inbound links from reputable sources, Google sees this as a signal of a quality resource, potentially leading to more frequent crawling.

- Site speed: A site that loads quickly is easier for Googlebot to crawl, which can result in more frequent visits. But don’t get too hung up on this.

- Content freshness: If you’re constantly updating your site with new, relevant content, Google will drop by more often to keep its index updated.

How to get your site crawled by Google in 9 ways

Here are 9 ways to get Google to crawl your website. Be aware, however, that Google guarantees neither crawling nor indexing.

By doing these things, you are simply prompting Google to crawl your site but, ultimately, it’s Google’s decision whether to crawl (or index) it.

Use Google Search Console to get Google to crawl your site

Getting Google to crawl your site can seem like a game of waiting and hoping. But, with Google Search Console (GSC), you can actually take a more proactive role. Here’s how.

Use the URL Inspection Tool to check and request Google to crawl a URL

The URL Inspection Tool in GSC is your go-to gadget for a quick status check on a URL. Here’s a step-by-step guide on how to use it:

- Log into your GSC account and select your property (website).

- In the left sidebar, click on ‘URL inspection’.

- Enter the URL you want to inspect.

- After running the inspection, you’ll see the status of your URL. If it hasn’t been crawled yet, click on ‘Request indexing’.

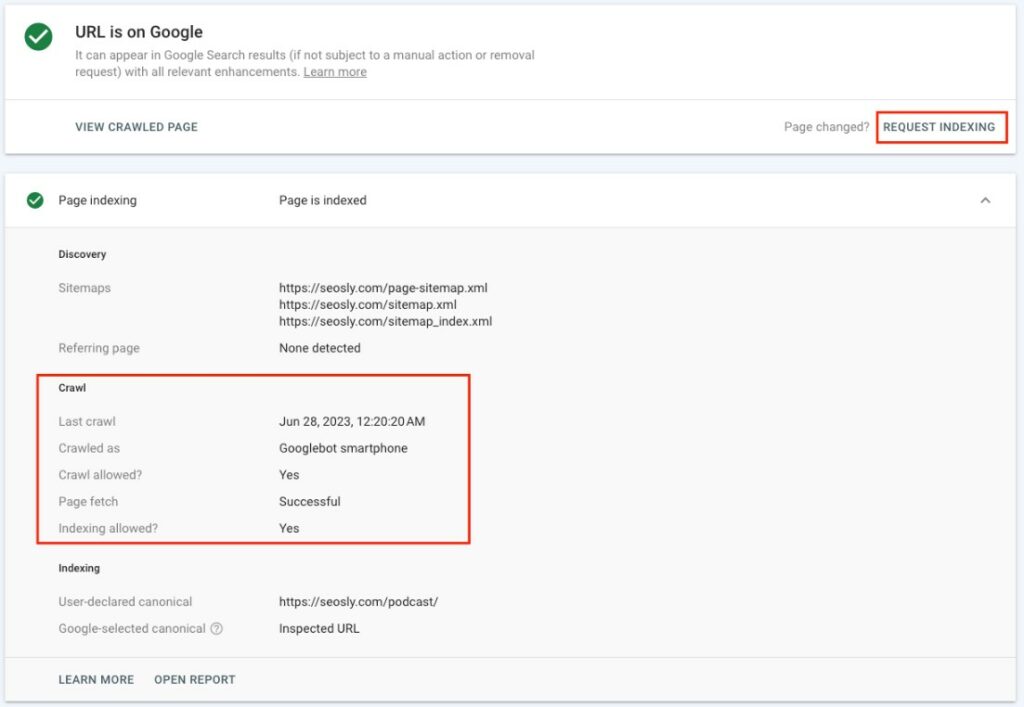

TIP: Open the ‘Page indexing’ accordion to learn exactly when Google last crawled the page. You will see that information next to ‘Last crawl.’

The ‘Request indexing’ button sends Googlebot an invite to come and crawl your page. But remember, it’s just a request. Googlebot’s visit isn’t guaranteed.

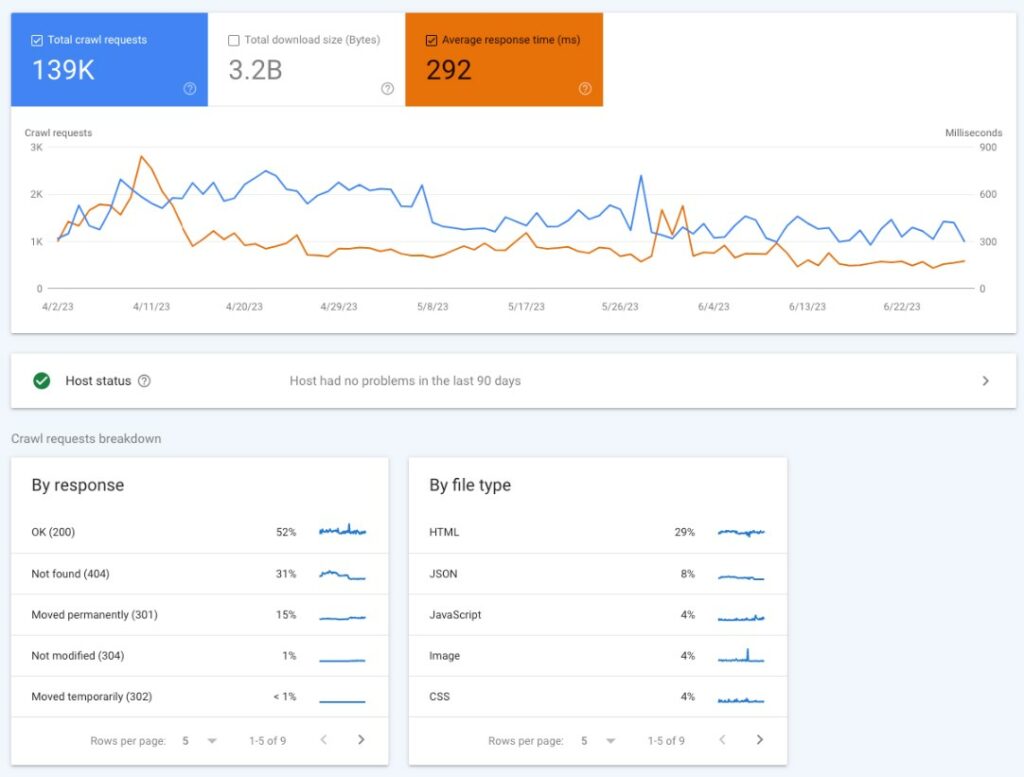

Check the Crawl Stats report to understand Google’s crawling activity

The Crawl Stats report in GSC offers an in-depth view of how Google has been crawling your site. Here’s how to access this report:

- Log into your GSC account and select your property.

- In the left sidebar, click on ‘Settings’ and then ‘Crawl stats’.

This report will show you the total number of crawl requests, total download size, average response time and details about crawl requests (like responses, file types, Googlebot type, and so on).

Note that this is not the entire picture. The report shows only a meaningful sample of requests, and it covers Google’s crawlers only, so you won’t find information about other crawlers (like the ones from Bing or other search engines).

I have an in-depth guide to Google Search Console Crawl Stats, so I recommend you check it out.

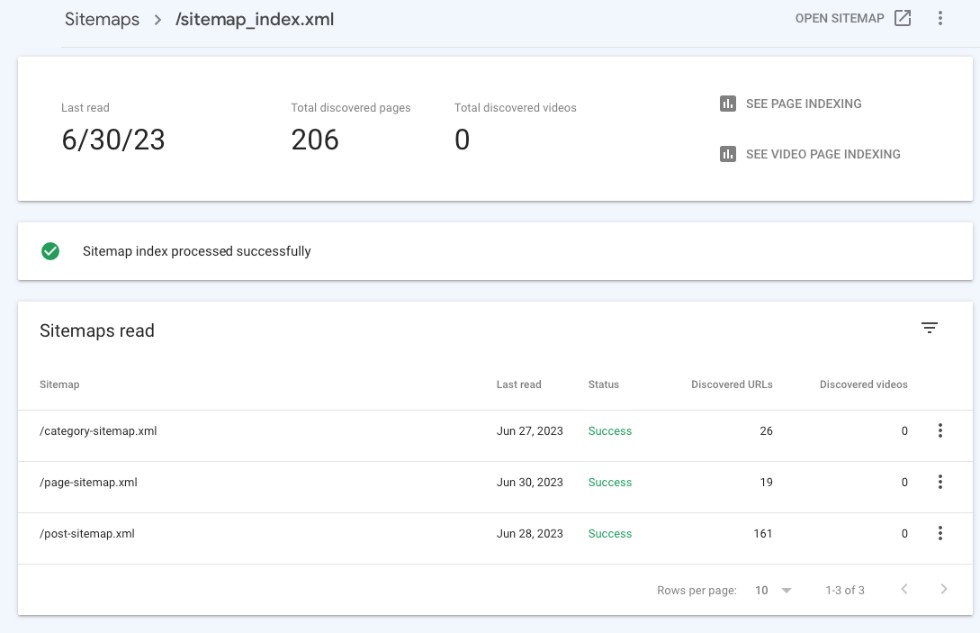

Submit an XML sitemap

Submitting an XML sitemap through GSC is like giving Googlebot a map of your site. It lists all the important pages on your site, making it easier for Googlebot to discover and crawl them. Here’s how to do it:

- Prepare your XML sitemap. Make sure it follows the protocol defined by sitemaps.org.

- Log into your GSC account and select your property.

- In the left sidebar, click on ‘Sitemaps’.

- In the ‘Add a new sitemap’ box, enter the URL of your sitemap and click on ‘Submit’.

This tells Google about your sitemap and encourages it to crawl the pages listed. Make sure to keep your sitemap updated with any new pages you want Google to crawl.
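If your CMS doesn’t generate a sitemap for you, here is a minimal sketch of how one could be built with Python’s standard library. The `build_sitemap` helper, the example.com URLs and the dates are all made up for illustration; the only fixed parts are the `urlset`, `url`, `loc` and `lastmod` elements and the namespace from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace required by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for a list of (url, lastmod) pairs.

    lastmod is a date string such as "2023-07-01", or None to leave it out.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        if lastmod:
            ET.SubElement(entry, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages on a placeholder domain.
pages = [
    ("https://www.example.com/", "2023-07-01"),
    ("https://www.example.com/blog/", None),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Save the output as a file such as sitemap.xml on your server, and use that file’s URL in the ‘Add a new sitemap’ box.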

Other ways to ensure Google crawls your site

Catching Google’s attention to crawl your site can be a bit tricky. But, with these straightforward steps, you can make your site more crawl-friendly.

Keep your sitemap correct and updated, and make sure Google knows about it

Your sitemap is the roadmap of your site. It shows Googlebot where to go, guiding it to all your important pages. Make sure your sitemap is error-free, updated, and submitted to Google via Google Search Console. Regularly check and maintain your sitemap, especially after adding new pages or removing old ones.

If you need more detail, I also have an article on how to submit a sitemap to Google and a guide on how to audit an XML sitemap to make sure you are making the most of it.

Use robots.txt correctly

Your robots.txt file is like a traffic director, telling Googlebot where it can and can’t go on your site. It’s essential to ensure you’re not blocking pages you want crawled and indexed. Also, consider blocking resources that may waste crawl budget, particularly on large sites.
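One quick way to sanity-check your rules is to test a few URLs against them before you publish the file. Below is a rough sketch using Python’s built-in `urllib.robotparser`. The robots.txt content, the example.com URLs and the `is_crawlable` helper are hypothetical. Keep in mind that Python’s parser uses simple prefix matching and may not treat wildcard patterns the way Google does, so treat the result as a first check only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a placeholder domain.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search

Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url, user_agent="Googlebot"):
    """Return True if the parsed rules allow user_agent to fetch url."""
    return parser.can_fetch(user_agent, url)


blog_ok = is_crawlable("https://www.example.com/blog/post-1")
cart_ok = is_crawlable("https://www.example.com/cart/checkout")
sitemaps = parser.site_maps()  # requires Python 3.8 or newer

print("Blog post crawlable:", blog_ok)
print("Cart page crawlable:", cart_ok)
print("Sitemaps listed:", sitemaps)
```

If a page you want in Google shows up as not crawlable here, look for a Disallow rule whose path is a prefix of that page’s URL.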

Maintain a logical site structure

An organized and clear site structure is not only user-friendly but also crawler-friendly. Ensure your site is logically organized, with clear navigation and internal linking. This is especially critical for large websites where proper structure can help Googlebot efficiently crawl your pages.
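One way to put a number on “logical structure” is click depth: how many clicks it takes to reach a page from the homepage. The sketch below assumes you already have a map of your internal links (for example, exported from a crawler). The `links` dictionary and the `click_depths` function are invented for illustration. It uses a breadth-first search to find the shortest path to each page and lists pages that no internal link reaches.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search: fewest clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
links = {
    "/": ["/blog/", "/products/"],
    "/blog/": ["/blog/post-1", "/"],
    "/products/": ["/products/widget"],
    "/blog/post-1": [],
    "/products/widget": [],
    "/old-landing-page": ["/"],  # no other page links here
}

depths = click_depths(links, "/")
# Pages in the graph that cannot be reached from the homepage.
orphans = sorted(set(links) - set(depths))

print(depths)
print("Orphan pages:", orphans)
```

Pages buried many clicks deep, or not reachable at all, are good candidates for extra internal links.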

Prioritize mobile-first indexing

Since Google has switched all sites to mobile-first indexing, it’s imperative to ensure your site is mobile-friendly. This means your site’s mobile version should be as comprehensive and user-friendly as the desktop version.

Regularly update your content

Frequent content updates signal to Google that your site is alive and relevant. Whether it’s blog posts, new products, or updates to existing pages, keep your content fresh and up-to-date.

Optimize page load speed

A fast-loading site not only provides a better user experience but also encourages Google to crawl more of your pages. Use Google’s PageSpeed Insights tool to check your page load speed and get suggestions for improvement.

TIP: Don’t become obsessed with speed, as it does not really translate into better rankings. Just make sure your site is not painfully slow. Take a common-sense approach here.

TIP 2: If you use WordPress, in 99% of cases WP Rocket will solve all your speed-related issues and will let you focus on things that really matter (i.e. content creation).

Acquire links from other sites

Backlinks signal to Google that your site is reputable and valuable, encouraging it to crawl your site. Focus on earning high-quality, relevant backlinks that will naturally lead Googlebot to your site.

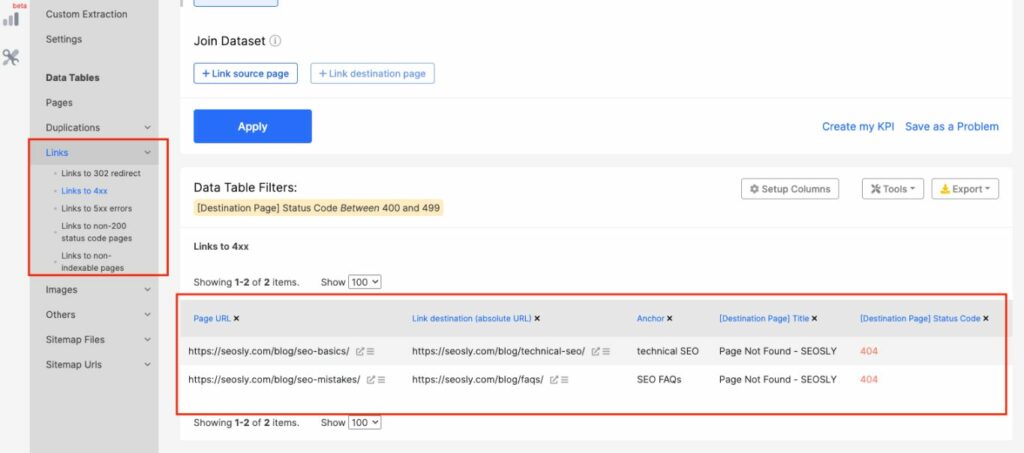

Avoid serious technical SEO errors

Major technical SEO errors, like redirect loops or 404 errors, can prevent Google from crawling your site. Regularly audit your site for technical errors and fix them promptly.

TIP: Tools like Semrush, SE Ranking or JetOctopus can help you audit your site and spot some technical issues before Google notices.
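To make the redirect loop problem concrete, here is a small sketch that walks through a list of redirect rules and flags loops and overly long chains. It assumes you have your redirects as a simple source-to-target map (say, exported from your server config or an SEO plugin). The `trace_redirects` function, the sample rules and the `max_hops` limit of 5 are arbitrary choices for this example, not a threshold published by Google.

```python
def trace_redirects(redirects, start, max_hops=5):
    """Follow start through a {source: target} redirect map.

    Returns (chain, verdict), where verdict is "ok", "loop" or "too long".
    """
    chain = [start]
    while chain[-1] in redirects:
        target = redirects[chain[-1]]
        if target in chain:
            # We are about to revisit a URL, so the chain never ends.
            return chain + [target], "loop"
        chain.append(target)
        if len(chain) - 1 > max_hops:
            return chain, "too long"
    return chain, "ok"


# Hypothetical redirect rules.
redirects = {
    "/old-page": "/new-page",
    "/a": "/b",
    "/b": "/a",
}

ok_chain, ok_verdict = trace_redirects(redirects, "/old-page")
loop_chain, loop_verdict = trace_redirects(redirects, "/a")

print(ok_chain, ok_verdict)
print(loop_chain, loop_verdict)
```

Any URL that comes back as a loop should be fixed so it redirects straight to a final page that loads normally.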

Common issues preventing Google from crawling your site

There are several reasons why Google might have trouble crawling your site. Let’s take a look at some of the most common issues:

- Blocked by robots.txt: Your site or certain pages on your site may be blocked by a directive in your robots.txt file. This prevents Googlebot and other search engine crawlers from accessing and crawling those pages.

- 404 errors: Pages that return a 404 error are not crawlable. These errors can occur when pages are removed or their URLs are changed without a proper redirect in place.

- Server errors: If your server frequently goes down or if it’s slow to respond, Googlebot may have trouble crawling your site.

- Site is too slow: If your site is too slow to load, Googlebot may give up and move on before it’s finished crawling. As I said, this mostly applies when your site is painfully slow.

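- Misuse of meta tags: Incorrect use of the noindex or nofollow meta tags can also cause problems. A noindex tag keeps a page out of Google’s index (Google still has to crawl the page to see the tag), while nofollow tells Google not to follow the links on a page, which can stop it from discovering the pages they point to.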
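If you suspect the meta tag issue from the list above, you can check a page’s HTML for robots directives with a few lines of Python. This is only a sketch: the `RobotsMetaParser` class, the `robots_directives` helper and the sample HTML are made up for the example, and it looks only at `<meta name="robots">` and `<meta name="googlebot">` tags, not at HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from robots and googlebot meta tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attr.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, nofollow".
            for part in content.split(","):
                if part.strip():
                    self.directives.add(part.strip().lower())


def robots_directives(html):
    """Return the set of robots directives found in an HTML string."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page source.
html = """<html><head>
<title>Example</title>
<meta name="robots" content="noindex, nofollow">
</head><body></body></html>"""

found = robots_directives(html)
print("noindex" in found, "nofollow" in found)
```

If a page you want in search results reports noindex here, remove the tag and then request indexing again in Google Search Console.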

Frequently Asked Questions (FAQs) on how to get Google to crawl your site

Here are the most frequently asked questions about getting Google to crawl your website.

What does it mean for Google to crawl my website?

Google “crawls” websites to discover new or updated pages and content. This process involves Googlebot, Google’s web spider, visiting and reading your webpages, following links, and gathering data for Google’s index. It’s the first step in getting your website visible in Google’s search results.

How can I get Google to crawl my website?

To get Google to crawl your website, submit an XML sitemap through Google Search Console and make sure your site is well structured and easy to navigate, offers high-quality, unique content, has a strong backlink profile, and loads quickly. You should also make sure your site is mobile-friendly, since Google uses mobile-first indexing.

How do I know if Google has crawled my website?

You can check Google Search Console’s crawl stats report to see when and how often Google has crawled your website. Alternatively, you can use the URL Inspection tool within Google Search Console to see if a specific page has been crawled and indexed.

Can I request Google to crawl my site?

Yes, you can ask Google to crawl your site or a specific page through the URL Inspection tool in Google Search Console. After inspecting a URL, you have the option to “Request indexing” if the page is not on Google or has been significantly updated.

How can I improve my website’s crawlability?

You can improve your website’s crawlability by maintaining a clear and simple site structure, optimizing page loading speed, ensuring all pages have internal links, and making your site mobile-friendly. Also, avoiding broken links and ensuring your robots.txt file is not blocking important pages can help improve crawlability.

Why isn’t Google crawling my website?

If Google isn’t crawling your website, it could be due to several reasons such as server errors, blocked URLs in your robots.txt file, slow loading times, a lack of internal and external links, or having a poor site structure.

Can frequent website updates prompt Google to crawl my site more often?

Yes, regularly updating your website with fresh, high-quality content can potentially prompt Google to crawl your site more often. However, content updates are just one of several factors that influence Google’s crawl frequency.