Updated: September 15, 2022.

Here are the most important SEO takeaways and SEO tips from Google I/O 2021.

I binge-watched the SEO sessions at Google I/O 2021. This part of Google I/O is especially useful for SEOs who want to update and refresh their knowledge and learn new things.

I really learned a LOT and here I want to share with you the notes I took while watching the SEO sessions at Google I/O. Enjoy!

❗ If you are new to SEO, don’t miss my SEO basics guide. And be sure to check the list of example technical SEO issues.

The Google I/O SEO sessions I analyzed

The takeaways come from the following 4 sessions from the Google Search Central + Web at Google I/O playlist:

- What’s new in Search

- Preparing for page experience signals

- What’s new in Web Vitals

- The business impact of Core Web Vitals

I strongly recommend you watch all of these sessions.

The most important SEO takeaways & SEO tips

I took notes on practically everything the Googlers shared with us, so you will probably find a lot of knowledge refreshers in these notes. There are also a lot of screenshots from the presentations.

☝️ Be sure to check the list of SEO best practices based on the basic SEO guide from Google.

What’s new in Search

A large part of technical SEO is about making it possible for search engines to fetch your HTML pages and to understand the content hosted there.

- Fetching in the SEO language is called crawling.

- Crawling is similar to how developers use wget or cURL to request the page.

- After the page has been crawled and rendered to process any JavaScript, the results are then parsed.

- Parsing in the SEO language is called indexing.

- Headings and other texts from the DOM are extracted and stored as tokens for an index of URLs.

- Search engines use various machine-readable elements to interpret the content on the page and to decide whether to store the page in the index or skip it.

- Structured data is one of these elements. It provides additional information about the page and its content.

- In order for Google to understand the links, they should be well-formed HTML elements and point at reasonable and fetchable URLs.

- During processing, Google also looks for links to new pages (both internal and external).
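
The point about well-formed links can be illustrated with a small sketch. It is not how Googlebot works internally, just a minimal parser built on Python's standard library that keeps only `<a>` elements with an `href` that resolves to an http(s) URL. The `LinkExtractor` class, the `extract_links` helper, and the sample markup are hypothetical names made up for this example.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collect links a crawler could follow: <a href> resolving to http(s)."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return  # an <a> without href is not a followable link
        absolute = urljoin(self.base_url, href)
        # Keep only URLs that can actually be fetched over HTTP(S).
        if urlparse(absolute).scheme in ("http", "https"):
            self.links.append(absolute)


def extract_links(html, base_url):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.links


sample = """
<a href="/pricing">Pricing</a>
<a href="https://other.example/docs">Docs</a>
<a onclick="goTo('/hidden')">No href</a>
<a href="javascript:void(0)">Script link</a>
"""
print(extract_links(sample, "https://example.com/"))
```

Only the first two links survive: the `onclick`-only anchor has no `href`, and the `javascript:` URL is not fetchable.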

Updates in Crawling (HTTP/2)

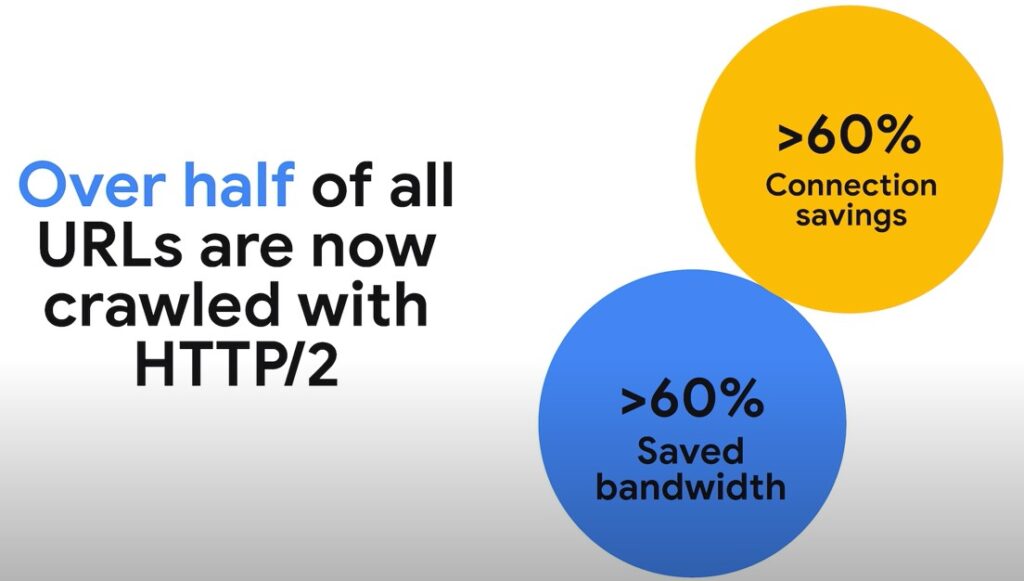

HTTP/2 is the next major version of HTTP (the protocol used for transferring data on the internet).

- Google has been crawling over HTTP/2 since November 2020.

- HTTP/2 has been enabled to make crawling more efficient.

- With HTTP/2 Google can open a single TCP connection and efficiently request multiple files in parallel so that Google does not need to spend as much time crawling the server as before.

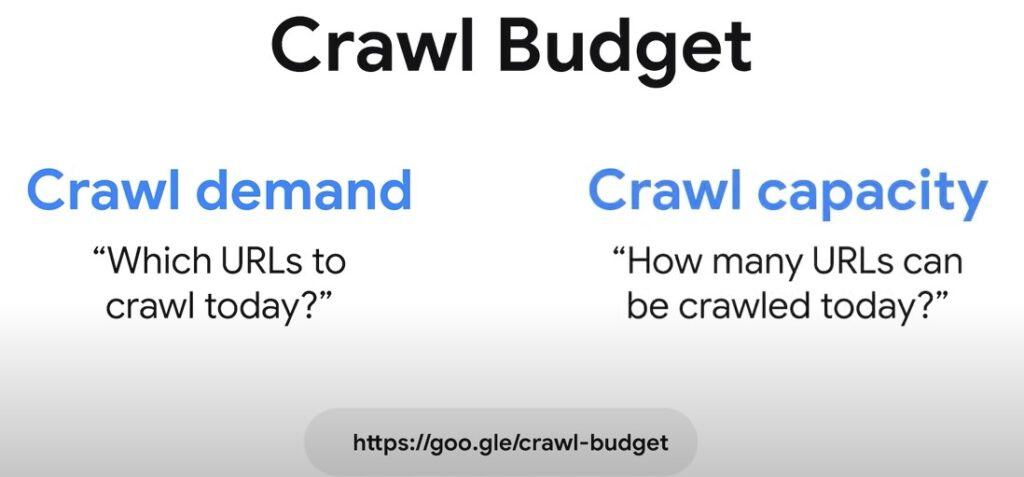

- Crawl budget is a mix of how many URLs Google wants to crawl from your site (the crawl demand) and how many URLs Google systems think your server can handle without problems (the crawl capacity).

- HTTP/2 crawling allows Google to request more URLs with a similar load on the server.

- The decision to crawl with HTTP/2 is based on whether the server supports it and whether Google systems determine there’s a possible efficiency gain.

- Website owners do not need to do anything other than enable HTTPS and HTTP/2 support on their web server.

- Over half of all URLs are now crawled with HTTP/2. Thanks to stream multiplexing and header compression, the number of connections and the bandwidth have both gone down significantly.
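
To check whether a server is ready for HTTP/2 crawling, one option is to look at which protocol it negotiates during the TLS handshake (ALPN). The sketch below uses only Python's standard `ssl` and `socket` modules. The function names are hypothetical, and the check only tells you what the server offers, not whether Google actually crawls it over HTTP/2.

```python
import socket
import ssl
from typing import Optional


def negotiated_protocol(host: str, port: int = 443, timeout: float = 5.0) -> Optional[str]:
    """Return the ALPN protocol the server selects, e.g. "h2" or "http/1.1"."""
    context = ssl.create_default_context()
    # Offer HTTP/2 first, with HTTP/1.1 as a fallback.
    context.set_alpn_protocols(["h2", "http/1.1"])
    with socket.create_connection((host, port), timeout=timeout) as raw_sock:
        with context.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            return tls_sock.selected_alpn_protocol()


def supports_http2(protocol: Optional[str]) -> bool:
    """True when the negotiated protocol is HTTP/2 over TLS."""
    return protocol == "h2"


# Usage (requires network access; the host name is a placeholder):
# print(supports_http2(negotiated_protocol("example.com")))
```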

Structured Data

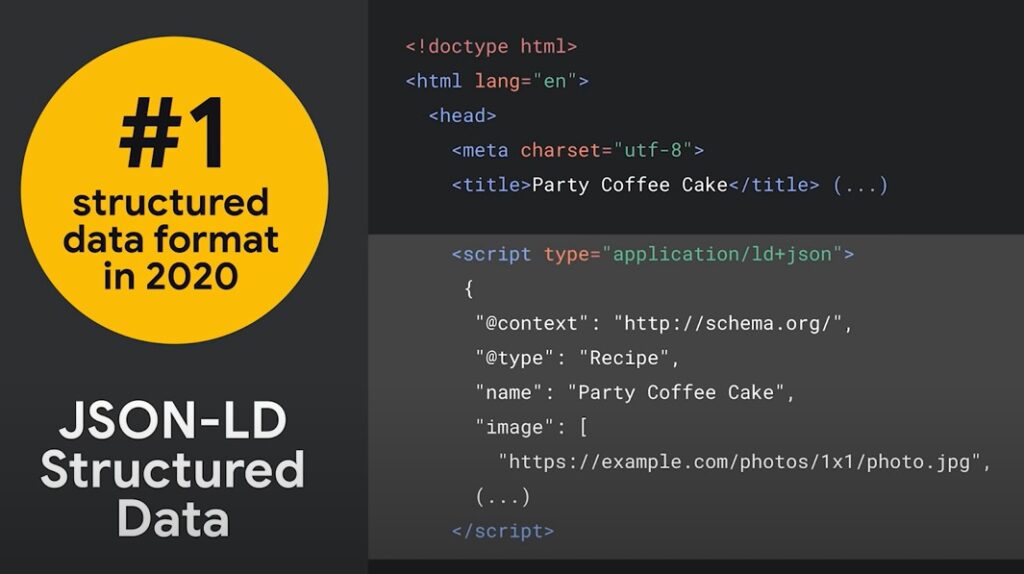

When it comes to machine-readable information on a page, Google primarily relies on structured data embedded in the HTML pages.

- JSON-LD has become the most popular way to provide structured data.

- All of Google’s modern search-specific metadata can be provided through JSON-LD and the schema.org vocabulary.

- Schema.org (started in 2011) is a globally accepted standard & open vocabulary for expressing information. It’s an important part of the open web infrastructure and is constantly being expanded.

- Google recently open-sourced Schemarama which provides data parsing and validation tools.

- To learn what you can do with structured data within Google Search, check the Search Gallery (a lot of new elements).
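
To make "structured data embedded in the HTML pages" concrete, here is a minimal sketch of how a parser might pull JSON-LD blocks out of a page. It uses only Python's standard library. The `JsonLdExtractor` class, the `extract_json_ld` helper, and the sample page are hypothetical and are not part of Schemarama or any Google tool.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed blocks are skipped in this sketch


def extract_json_ld(html):
    parser = JsonLdExtractor()
    parser.feed(html)
    return parser.items


page = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Article",
 "headline": "SEO takeaways from Google I/O 2021"}
</script>
</head><body><h1>SEO takeaways</h1></body></html>
"""
for item in extract_json_ld(page):
    print(item["@type"], "-", item["headline"])
```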

Structured data for videos

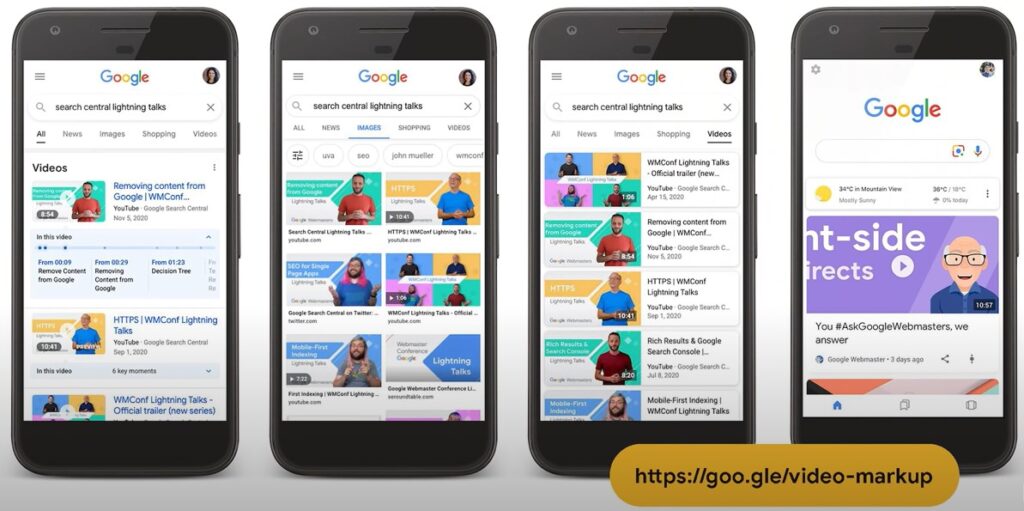

- Google has been making changes to how they recognize and show videos in Search.

- There are a few types of supported structured data that can be very interesting if embedding videos is important to your site.

- Videos and their landing pages are prominently shown in Search and Discover.

- You can host videos on your site or using any video hosting platform. Both approaches work similarly and are supported by Google Search.

Video key moments

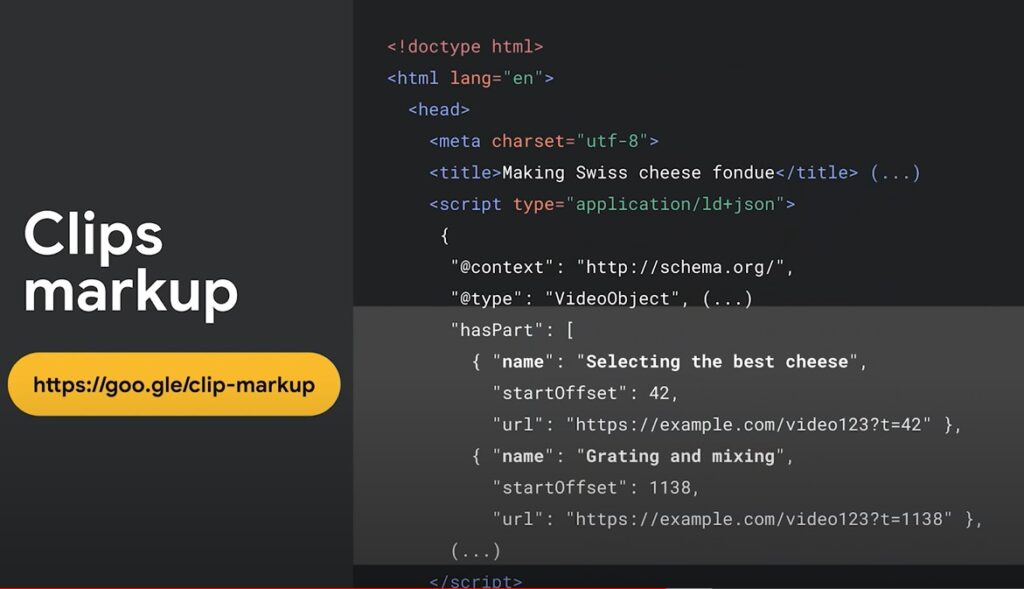

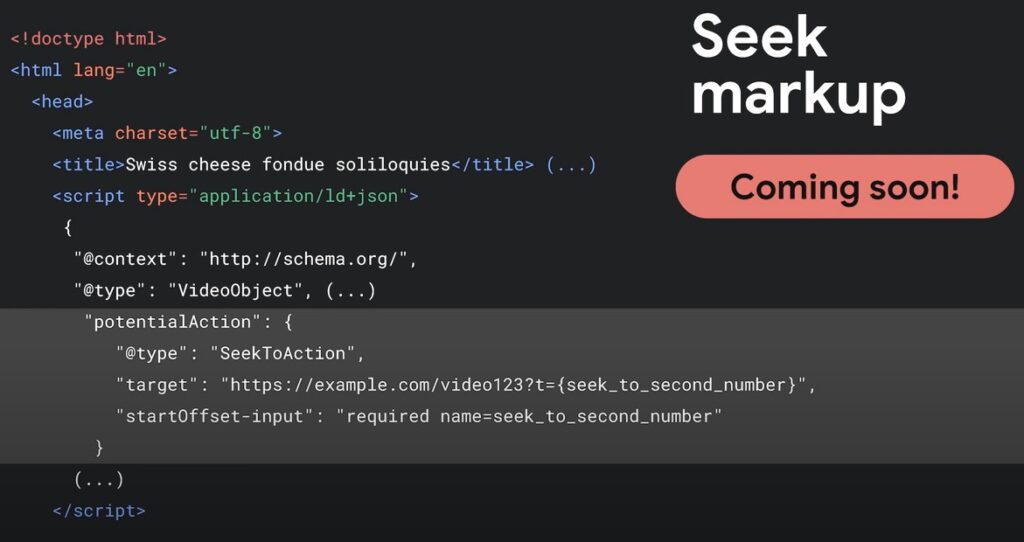

Search now allows users to directly skip to important moments in a video. Two types of markup will be supported to enable this:

- Clip markup (a web page can provide information on segments or clips within a video). These segments can then be shown directly in the search results. All you need to do is provide a textual tag, a starting time, and a URL that goes directly to that timestamp.

- Seek markup (if you cannot easily list the segment information for all of your videos). This markup will allow Google to use machine learning to analyze video content and automatically determine relevant segments. You just need to tell Google how to link to an arbitrary timestamp within the video hosted on your pages. Available in the near future.
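
The two kinds of markup can be sketched as JSON-LD generated from Python. The property names (`VideoObject`, `hasPart`, `Clip`, `startOffset`, `SeekToAction`, `startOffset-input`) follow the schema.org vocabulary as used in Google's video documentation. The `?t=` URL parameter, the helper names, and the sample values are assumptions made for illustration, and `endOffset` is an extra property beyond the three items listed above.

```python
import json


def video_with_clips(name, description, upload_date, thumbnail_url, page_url, clips):
    """Build VideoObject markup; clips is a list of (label, start_s, end_s)."""
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "uploadDate": upload_date,
        "thumbnailUrl": thumbnail_url,
        "hasPart": [
            {
                "@type": "Clip",
                "name": label,            # the textual tag
                "startOffset": start,     # starting time in seconds
                "endOffset": end,
                "url": f"{page_url}?t={start}",  # URL that jumps to the timestamp
            }
            for label, start, end in clips
        ],
    }


def seek_action(page_url):
    """Build Seek markup: tells a crawler how to link to any timestamp."""
    return {
        "@type": "SeekToAction",
        "target": f"{page_url}?t={{seek_to_second_number}}",
        "startOffset-input": "required name=seek_to_second_number",
    }


markup = video_with_clips(
    name="Intro to crawling",
    description="How search engines fetch pages.",
    upload_date="2021-05-18",
    thumbnail_url="https://example.com/thumb.jpg",
    page_url="https://example.com/video",
    clips=[("What is crawling?", 30, 95), ("HTTP/2 crawling", 95, 180)],
)
markup["potentialAction"] = seek_action("https://example.com/video")
print(json.dumps(markup, indent=2))
```

In practice a page would normally use one approach or the other. They are combined here only to show both shapes in one output.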

Preparing for page experience signals

Page experience pillars

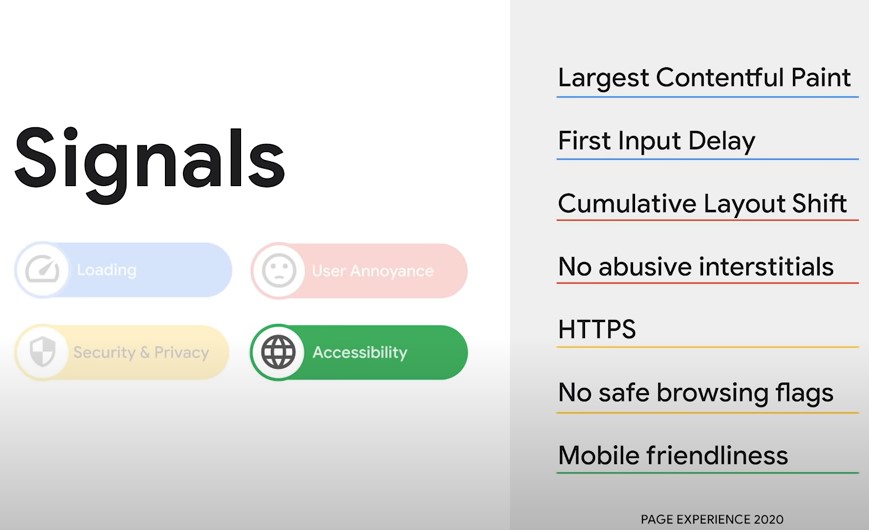

Google Search has always considered user experience as part of ranking.

- Mobile-friendliness as a ranking signal (2014)

- HTTPS (2014)

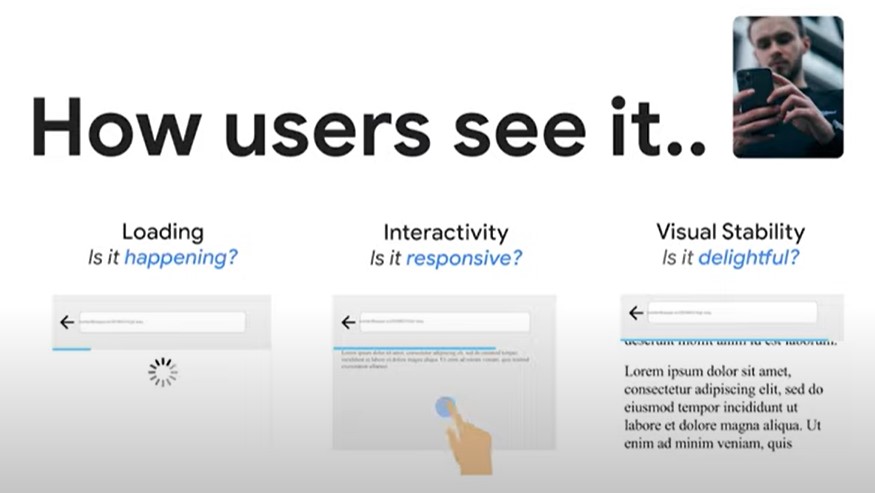

Page experience has four pillars of user experience:

- Loading (how fast or slow the resources of the page are downloaded and displayed on the user’s browser)

- User Annoyance (some of the webpage behavior that may get in the way of a user accomplishing a task)

- Security & Privacy (how safe, secure, and privacy-friendly a web page is)

- Accessibility (whether the site is accessible to all users, including those with disabilities). 15% of users worldwide have some form of disability.

These four pillars provide a structure of how you should think about the page experience for your site. Here is how these four pillars translate in practice into a page experience.

⚡ You may be interested in my guide to Google page experience and my Google page experience audit.

Loading

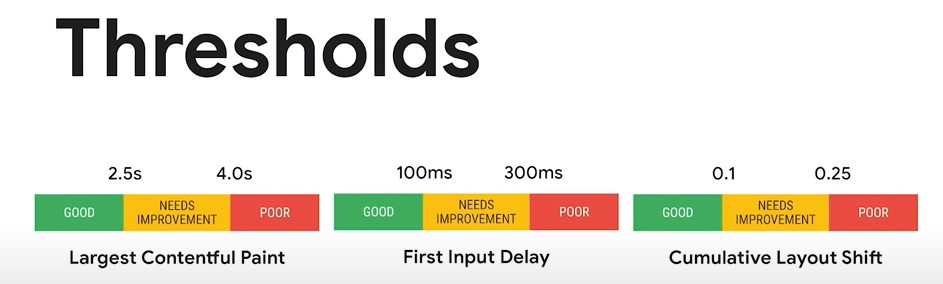

- Largest Contentful Paint (LCP) is a metric that reports the render time of the largest image or text block visible within the viewport and relative to when the page first started loading.

- First Input Delay (FID) is a metric that measures the time from when a user first interacts with a page (click on a link or tap on a button) to the time when the browser is actually able to begin processing the handlers in response to that interaction.

User Annoyance

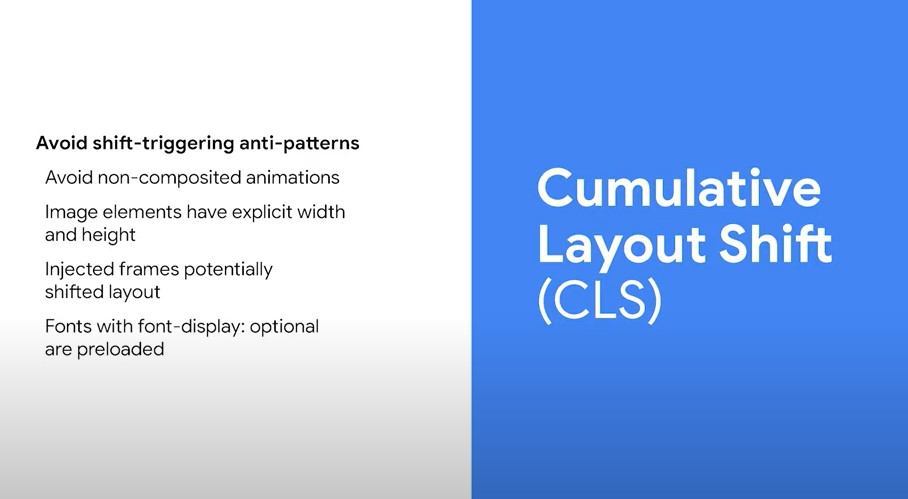

- Cumulative Layout Shift (CLS) measures the sum total of all the individual layout shift scores for every unexpected layout shift that occurs on the page. A layout shift occurs anytime a visible element changes its position from one rendered frame to the next.

- No intrusive interstitials is an existing Search ranking policy and an associated signal that detects the presence and use of interstitials that are user-hostile. Abusive interstitials are often used to trick users into doing something they don’t want to do and prevent them from reading or interacting with the page.

- Examples of good interstitials include a GDPR consent prompt or an interstitial that shows updated business hours during the COVID-19 pandemic.

Security & Privacy

- HTTPS

- No Safe Browsing flags (malware, unwanted software downloads, or social engineering)

Every user deserves to have a safe and secure browsing experience. HTTPS and Safe Browsing work together to ensure that it happens.

Accessibility

- Mobile-friendliness (how effective the pages are on small screens)

Core Web Vitals (update to CLS)

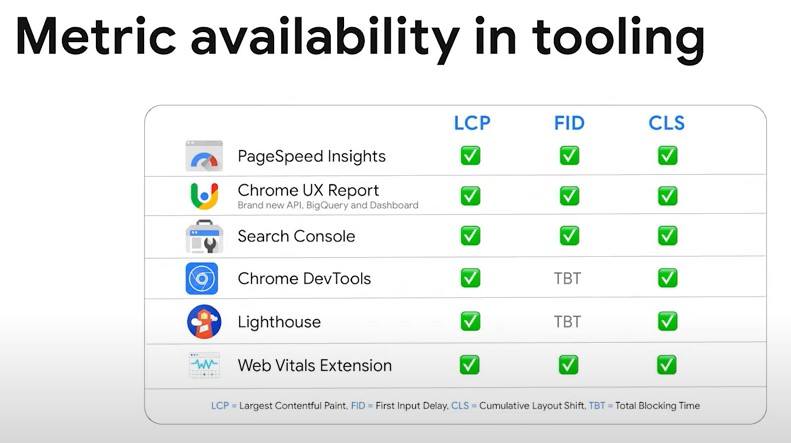

LCP, FID, and CLS are the Core Web Vitals: a set of metrics that apply to all web pages, should be measured by all site owners, and will be surfaced across all Google tools.

Core Web Vitals come with a robust set of threshold guidance that maps to user expectations.

Each Core Web Vitals metric:

- represents a distinct facet of user experience

- is measurable in the field

- reflects the real-world experience of a critical user-centric outcome
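
As a rough illustration of how threshold guidance maps a measured value to a rating, here is a small sketch. The thresholds are the ones Google publishes for Core Web Vitals (they are not spelled out in these notes), and the `rate` helper is a hypothetical name.

```python
# (upper bound for "good", upper bound for "needs improvement") per metric.
THRESHOLDS = {
    "LCP": (2.5, 4.0),    # seconds
    "FID": (100, 300),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless score
}


def rate(metric, value):
    """Classify a field value as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


print(rate("LCP", 2.1))   # LCP of 2.1 s
print(rate("FID", 180))   # FID of 180 ms
print(rate("CLS", 0.3))   # CLS of 0.3
```

Google assesses these values at the 75th percentile of page loads, so the input here would typically be a p75 value taken from field data.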

❗ The way Cumulative Layout Shift (CLS) is measured has been updated. Previously it accounted for the entire life cycle of the page; now it is measured in windows with a maximum duration of five seconds. This is how CLS will be measured once it becomes a ranking signal.

⚡ Check my Core Web Vitals audit to learn how to audit Core Web Vitals on your site.

Top Stories Update

Together with the launch of the page experience ranking, the Top Stories feature in Google Search will also be updated:

- The eligibility requirement will be broadened to all qualifying pages (irrespective of their CWV score or page experience status) provided that they meet the Google News content policies.

- The page experience ranking will be used to ensure high-quality news articles are surfaced on mobile.

Page experience ranking coming to desktop

Page experience is critical to all users regardless of the device they are using. Updated guidance, documentation, and tools will be provided.

Prefetching

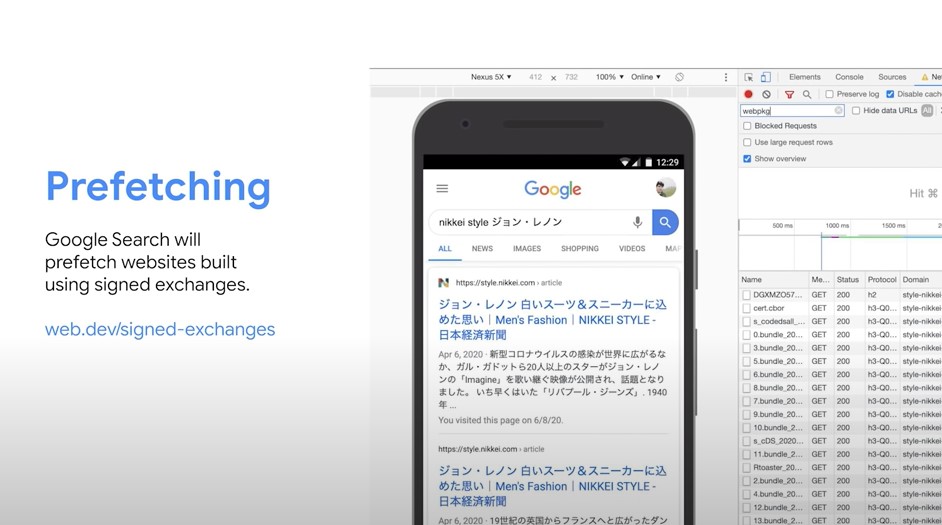

Google Search will prefetch websites built using signed exchanges.

- This is a way to improve page performance for your users by taking advantage of prefetching on Google Search through signed exchanges.

- Pages are prefetched and stored on the user’s browser, ready to be loaded when the user clicks on the result, leading to near-instant loading.

- This is possible through the use of Google’s fast cache servers distributed around the world.

Google Search Console

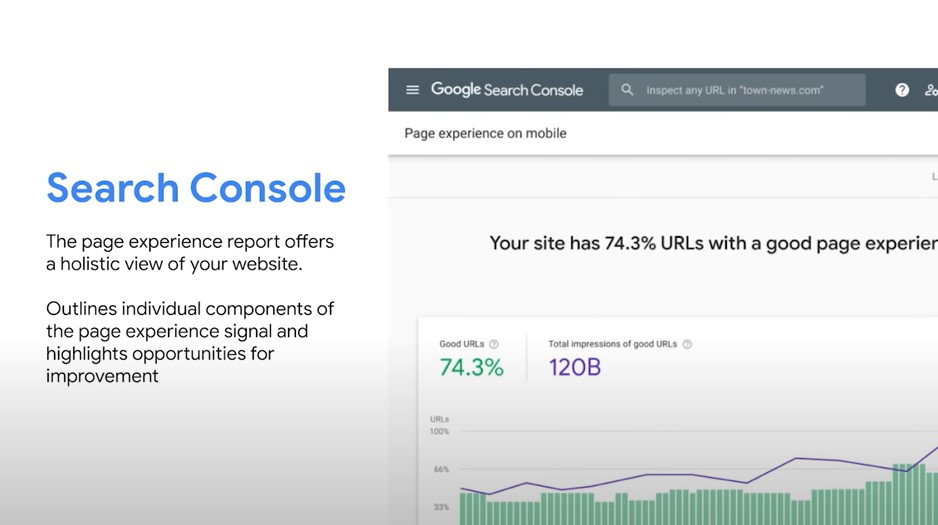

The new Search Console Page Experience report offers a holistic view of your website.

- It outlines individual components of the page experience signals and shows areas for improvement.

- The Search Performance report has been updated to allow for filtering pages with a good page experience.

What’s new in Web Vitals

Web Vitals are metrics for web pages with a focus on user experience. They measure problems that frustrate users like poor performance or content shifting around.

- Core Web Vitals is a subset of Web Vitals considered most important to focus on.

- Core Web Vitals apply to all web pages and are surfaced across all Google tools.

- They are measurable in the field so you can get a ground truth of what real users see.

- They focus on the critical user-centric outcome (a small set of metrics).

Google tries to keep the Core Web Vitals to a small set of metrics so that the most important things stay in focus. Why?

- To make it easier for developers to focus on the user experiences that matter.

- To make the web a better place for users.

Metrics and Tooling Updates

There are two amazing resources to pull from to learn how users are interacting with and experiencing the page:

- Lab data (collected synthetically in a testing environment; critical for tracking down bugs and diagnosing issues because it is reproducible and has an immediate feedback loop).

- Field data (allows you to understand what real-world users are experiencing, conditions that are impossible to simulate in the lab).

Either set of metrics taken in isolation isn’t nearly as powerful as the two combined.

- Field data are recorded from real users on their real devices.

- Every time your users load your page, it adds a single data point to this set.

- Because of this, a single field metric represents all of your users (thousands of data points, variable cache conditions, network, and device environments).

- Real-world data present you with all sorts of variables and unknowns.

- When you are trying to optimize based on data that represents so many different conditions, it’s difficult to know where to start. And that’s when synthetic or lab testing becomes so useful.

- When you run Lighthouse on your page and get an LCP value, it is a single data point collected in real-time for you, calibrated to represent a user in your upper percentiles.

- This allows you to use a single set of values as representative of your user’s experience on your page so that you can dive deep and debug against it.

If you’re optimizing against your Lighthouse Performance Score (which is calibrated to be representative of your upper percentiles), you are optimizing for the majority of visitors to your page.

- The Lighthouse Performance Score is a tool to prepare yourself to succeed with users in the real world in dimensions of quality they care about.

- The closer you are to a 100/100 score, the less you’re leaving to chance for what can go wrong in the field.

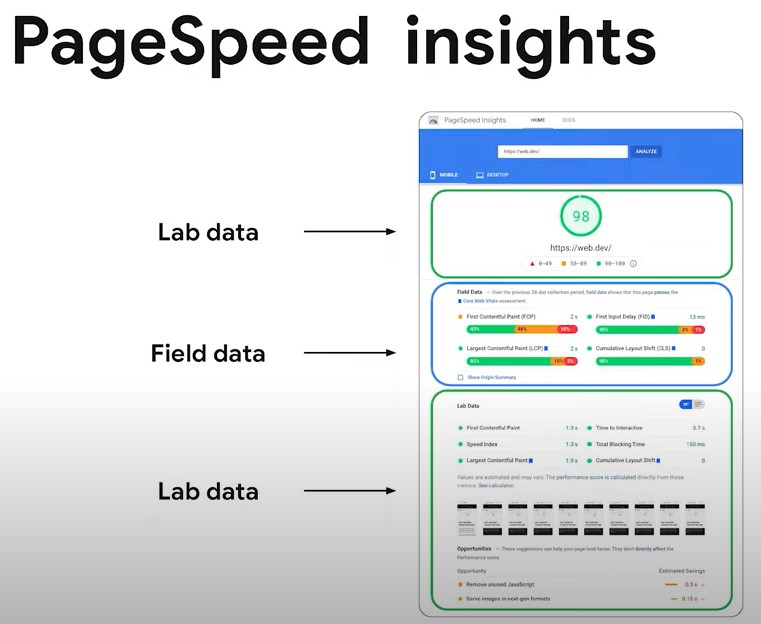

Google PageSpeed Insights

At the top of Google PageSpeed Insights, there is the Lighthouse score in the big score gauge.

- It’s a weighted, blended combination of user-centric metrics collected in a lab setting. It includes all six lab metrics detailed in the Lab Data section.

- The goal of this high-level performance score is to make sure you have the ability to quickly assess at a glance how well your page is likely to deliver a good experience in real-world conditions with your users.
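
A "weighted, blended combination" can be sketched as a weighted average of per-metric scores. The weights below are illustrative assumptions and are not taken from the session. They resemble the Lighthouse 8 weighting, but check the Lighthouse scoring documentation for current values. Each per-metric score is assumed to be already normalized to the 0 to 1 range, which is a step Lighthouse performs itself and which is not reproduced here.

```python
# Illustrative weights for the six lab metrics (assumption; they sum to 1.0).
WEIGHTS = {
    "FCP": 0.10,  # First Contentful Paint
    "SI": 0.10,   # Speed Index
    "LCP": 0.25,  # Largest Contentful Paint
    "TTI": 0.10,  # Time to Interactive
    "TBT": 0.30,  # Total Blocking Time
    "CLS": 0.15,  # Cumulative Layout Shift
}


def performance_score(metric_scores):
    """Blend per-metric scores (each 0..1) into a single 0..100 score."""
    total = sum(WEIGHTS[name] * metric_scores[name] for name in WEIGHTS)
    return round(total * 100)


scores = {"FCP": 0.9, "SI": 0.8, "LCP": 0.6, "TTI": 0.7, "TBT": 0.4, "CLS": 1.0}
print(performance_score(scores))
```

With weights like these, a weak TBT or LCP score drags the overall number down much more than a weak FCP score does.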

The Field data section is sourced from the Chrome User Experience Report (CrUX).

- This is the URL-level data that show how many page loads over the previous month offered users who visited the URL a good experience, or one that needed improvement.

- You can also see the Core Web Vitals assessment marked by the blue ribbon.

- Below the URL-level data, Origin data is also often available to show you the same insights but across the entire origin as opposed to just the URL.

❗ It’s OK if the numbers between the lab and the field don’t match. They are giving you different information: one which is useful to debug, another which is useful to validate how your users are experiencing your site.

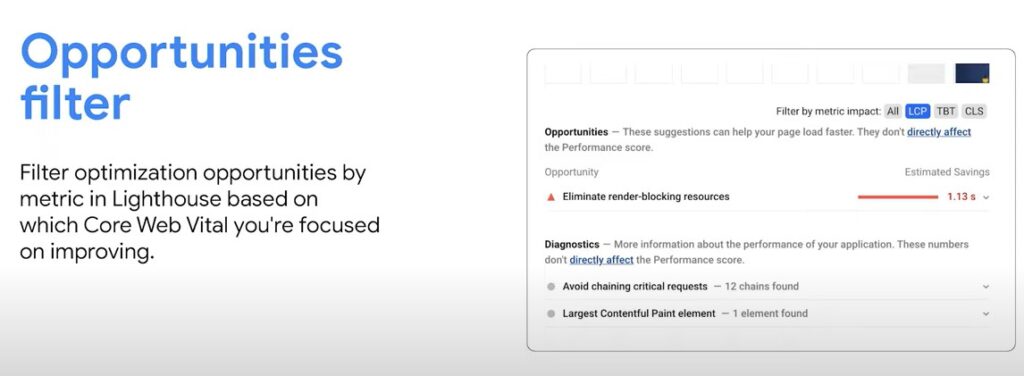

UPDATE: Opportunities filter coming to Google PageSpeed Insights. It will allow you to filter opportunities to improve the specific metric you are working on.

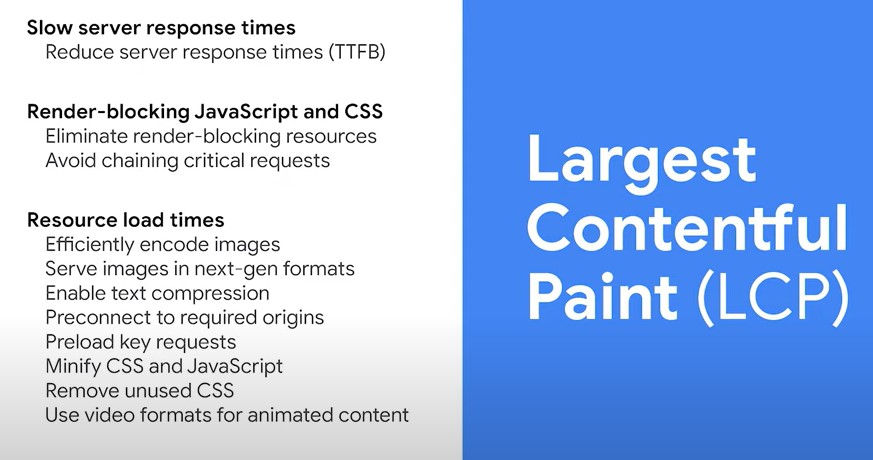

Largest Contentful Paint (LCP)

Largest Contentful Paint (LCP): When is the main content loaded?

- LCP is a good proxy for how long it took until the user could see the main content of the page.

- It’s important to remember to look at LCP in the field.

- Updates to LCP include ignoring background images and better carousel handling.

- Optimizing LCP can have a huge impact for users.

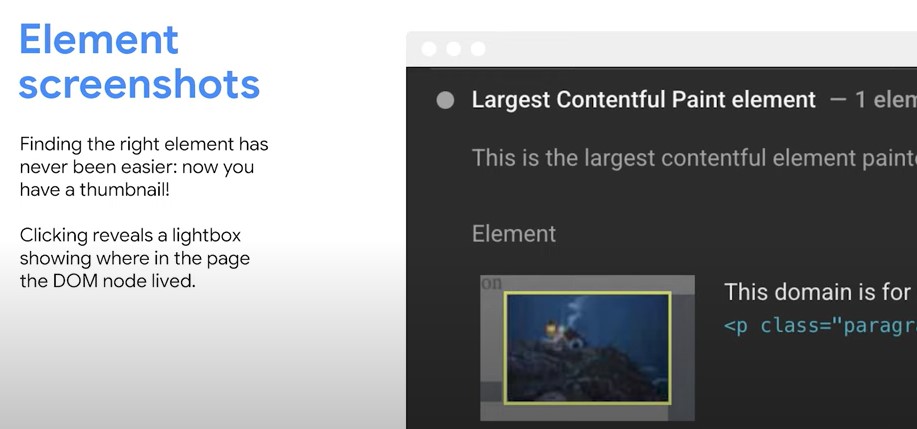

Lighthouse makes it easy to isolate which element to optimize. With element screenshots, a full-page screenshot is taken so that viewing DOM elements and their details is easy.

Cumulative Layout Shift (CLS)

Cumulative Layout Shift (CLS): Avoid unexpected content shifts

- CLS measures how much and how often content unexpectedly shifts around on the page. This can be a really frustrating experience for users.

- CLS has so far been measured throughout the whole lifetime of the page (see the windowing update below).

- A user who loaded the page and navigated away quickly will have a good score.

- But the user who scrolls through the whole article (and images and ads pop up) will have a poor score.

- Make sure to check your CLS score in the field to ensure users aren’t having problems after the page finishes loading.

- Improving CLS can have a big impact for your users (more page views per session, longer session duration)

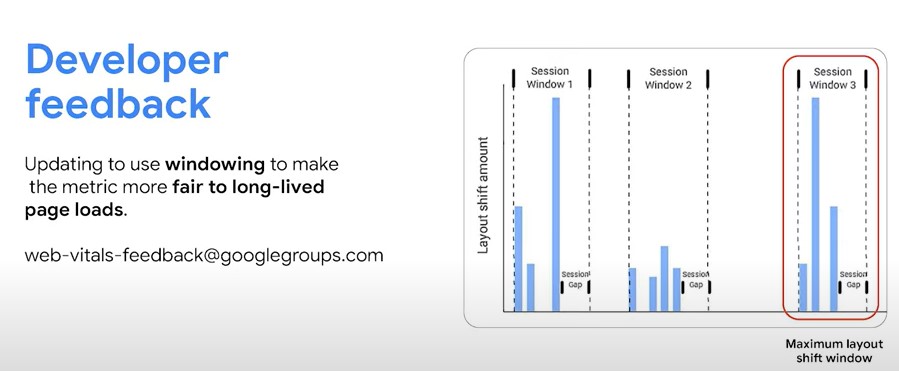

UPDATE: CLS now uses windowing to make the metric fairer to long-lived pages.

There are some cases where a page can be open for a very long time and the score can increase too much. That’s why CLS now uses a window that captures the most frustrating burst of layout shifts on the page.
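
The windowing idea can be sketched in a few lines. The five-second cap comes from the session. The one-second gap that closes a window is taken from Google's public description of the updated metric and is not in these notes, so treat it as an assumption. The `windowed_cls` function and the sample shifts are hypothetical.

```python
def windowed_cls(shifts, max_gap=1.0, max_window=5.0):
    """Return the largest sum of layout shift scores within one window.

    shifts: list of (timestamp_seconds, score), sorted by timestamp.
    A new window starts when the gap since the previous shift reaches
    max_gap, or when the current window would exceed max_window.
    """
    best = 0.0
    window_start = None
    previous_time = None
    window_sum = 0.0
    for time, score in shifts:
        starts_new_window = (
            window_start is None
            or time - previous_time >= max_gap
            or time - window_start >= max_window
        )
        if starts_new_window:
            window_start = time
            window_sum = 0.0
        window_sum += score
        previous_time = time
        best = max(best, window_sum)
    return best


shifts = [(0.5, 0.05), (1.0, 0.05), (10.0, 0.02), (10.4, 0.03), (30.0, 0.04)]
lifetime_total = sum(score for _, score in shifts)
print(f"lifetime sum: {lifetime_total:.2f}, windowed CLS: {windowed_cls(shifts):.2f}")
```

In this sample the old lifetime sum keeps growing with every shift, while the windowed value only reflects the worst burst (the two shifts during load).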

First Input Delay (FID)

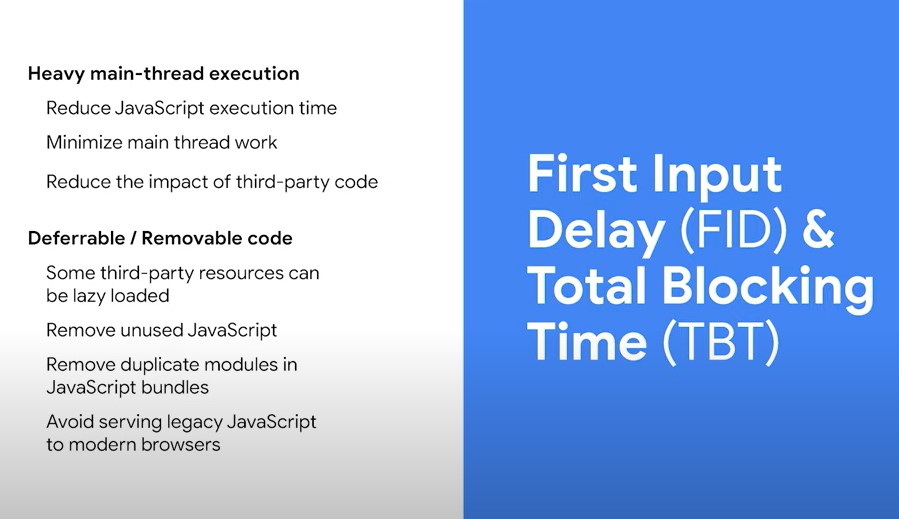

First Input Delay (FID): How long does it take to interact?

- FID measures the time it takes between the user tapping or pressing a key and the browser being able to process that input.

- Long-running JavaScript on the main thread is usually the biggest problem that makes FID go up and slows down the page for users.

Example: The user is trying to tap on the menu, but the main thread is blocked by JavaScript running on the page, so the browser has to wait until all the JavaScript is finished. That makes the menu take a really long time to open.
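
The menu example can be turned into a tiny simulation. This is a deliberately simplified model: it assumes the input only waits for the task that is running when the user taps, and it ignores anything else queued on the main thread. The `input_delay` function and the task list are hypothetical.

```python
def input_delay(input_time_ms, tasks):
    """Return how long an input waits before the browser can handle it.

    tasks: list of (start_ms, duration_ms) main-thread tasks.
    If the input arrives while a task is running, it waits until that
    task finishes; otherwise the main thread is idle and the delay is 0.
    """
    for start, duration in tasks:
        end = start + duration
        if start <= input_time_ms < end:
            return end - input_time_ms
    return 0


main_thread_tasks = [(0, 80), (100, 400), (600, 30)]
print(input_delay(150, main_thread_tasks))  # tap lands inside the 400 ms task
print(input_delay(550, main_thread_tasks))  # tap lands while the thread is idle
```

The same tap can produce very different delays depending on when it happens, which is why FID can only be measured with real users.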

Lab vs field data

- For real users, the first input can occur at many different times during page load.

- It’s recommended to reduce long-running JavaScript on the main thread so that it’s never busy when the user tries to click.

- That’s why there is the lab metric Total Blocking Time (TBT) that will help you understand and reduce the main thread blocking time.

- FID is only measurable in the field but you can use Total Blocking Time as a proxy lab metric that allows you to debug and improve your interactivity in the lab before your users ever have to experience a bad FID.

- The Lighthouse report lets you find opportunities to optimize your main thread execution times and potentially defer or remove portions of code entirely.
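
Total Blocking Time can be sketched as follows. Per Lighthouse's definition, any main-thread task longer than 50 ms counts as a long task, and only the portion beyond 50 ms counts as blocking. The sketch assumes the list already contains only tasks that ran between First Contentful Paint and Time to Interactive. The function name and sample durations are hypothetical.

```python
def total_blocking_time(task_durations_ms, threshold_ms=50):
    """Sum the blocking portion (time beyond the threshold) of each long task."""
    return sum(
        duration - threshold_ms
        for duration in task_durations_ms
        if duration > threshold_ms
    )


durations = [80, 400, 30, 120]
print(total_blocking_time(durations))  # 30 + 350 + 70
```

Splitting the 400 ms task into smaller chunks of under 50 ms each would remove 350 ms of blocking time, even though the total amount of work stays the same.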

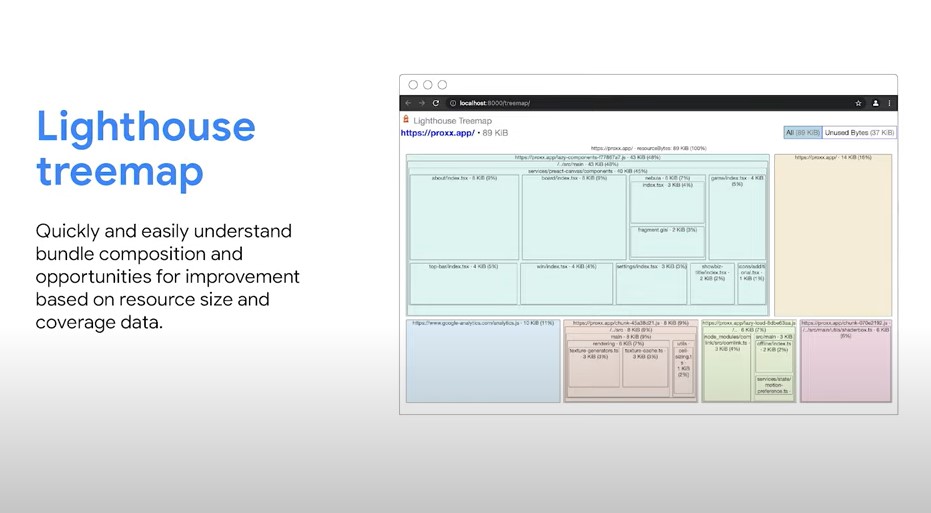

❗ A new feature, the Lighthouse treemap, will let you easily understand bundle composition and explore opportunities for improvement based on both resource sizes and coverage.

Core Web Vitals are in all developer tools.

❗ Check my list of tools to measure Core Web Vitals.

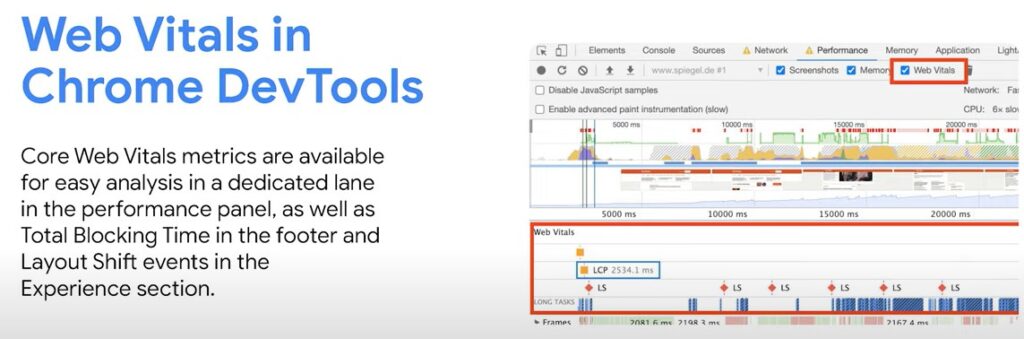

Web Vitals in Chrome DevTools

- There is a dedicated lane in the performance panel in Chrome DevTools that allows you to isolate exactly when critical Web Vitals timings are being measured.

- You can see these timings in the context of your overall waterfall which can be critical to diagnosing many factors that may be affecting your performance.

- Total Blocking Time is also visible clearly in the footer. Layout shifts can be teased apart and analyzed in the Experience section.

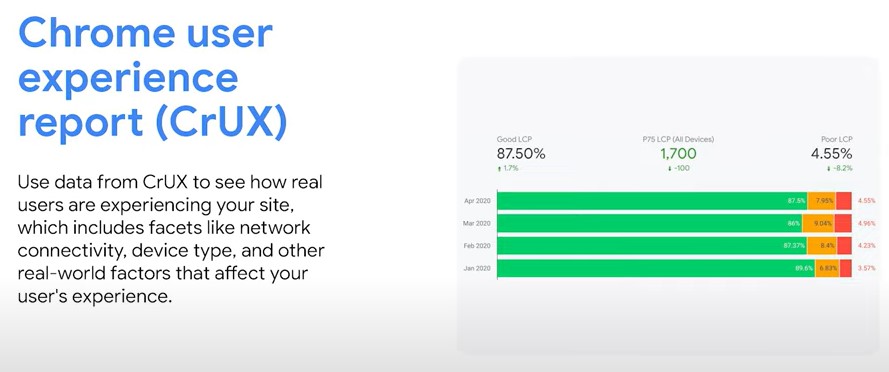

Core Web Vitals in Chrome User Experience Report (CrUX)

- It’s critical to leverage field data to understand how real users are experiencing your site.

- CrUX is powered by real user measurement of key user experience metrics across the public web, aggregated from users who have opted in.

- Using CrUX allows you to further isolate what needs your attention most with regards to how you can improve your site experience for users.

Business Impact of Core Web Vitals

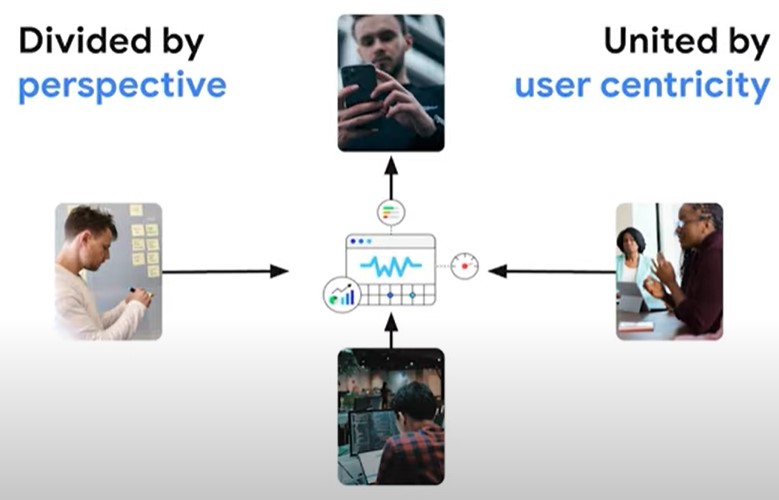

Here are some nice screenshots I thought I would share with you. They show how different people look at Core Web Vitals.
